TL;DR:

- Accurate project sizing prevents missed deadlines and budget overruns by establishing reliable estimates.

- Effective sizing involves breaking down tasks, selecting suitable methods, and involving the right team members.

- Human biases and organizational pressures significantly affect estimation accuracy, so calibration and honesty matter as much as the method chosen.

Missed deadlines and budget overruns rarely happen because developers write bad code. They happen because teams start building before anyone has a credible answer to the question: how much work is this, really? Inaccurate project sizing cascades quickly, turning a three-month roadmap into a six-month fire drill and a $200,000 budget into a $340,000 regret. Mastering the project sizing process gives product managers and business stakeholders a structured way to convert ambiguous requirements into reliable estimates, reducing risk before a single sprint begins. This article covers the core methodologies, how to run an effective sizing session, a step-by-step execution guide, and the most common pitfalls that derail even experienced teams.

Table of Contents

- What is the project sizing process?

- Preparing for a project sizing session

- Step-by-step: How to size a software project

- Common pitfalls and how to verify your sizing

- The uncomfortable truth about project sizing: Human bias, not just math

- Estimate smarter: Tools for effortless project sizing

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Break down large tasks | Decompose work into items of five days or less to increase sizing accuracy. |
| Mix methods for best results | Combine rapid relative sizing with detailed bottom-up or PERT estimation for complex projects. |
| Account for uncertainty | Add a 15-20% management reserve to your estimates to cover unknown risks. |
| Calibrate with real data | Track estimates versus actuals and adjust your process in sprint retrospectives. |

What is the project sizing process?

Project sizing is the practice of estimating the scope, effort, and complexity of a software project before committing resources or timelines. It sits at the intersection of project planning and risk management, giving teams a quantified basis for decisions that would otherwise rely on intuition. Without it, resource allocation becomes guesswork, budget control is reactive, and delivery predictability is essentially nonexistent.

A grasp of the essentials of software sizing comes before the choice of any specific technique. Project sizing uses methods such as T-shirt sizing, PERT, and bottom-up estimation, each suited to a different project stage and level of requirement clarity.

| Method | Best timeframe | Primary use |
|---|---|---|
| T-shirt sizing | Early discovery | Relative complexity ranking |
| Planning Poker | Sprint planning | Team consensus on story points |
| PERT | Uncertain tasks | Weighted average with risk range |
| Bottom-up estimation | Detailed planning | Task-level hour aggregation |
| Reference class | Any stage | Calibration against historical data |

Sizing is not a one-time event. Teams need it across multiple contexts:

- Sprint planning: Confirming backlog items fit within a sprint capacity

- Annual budgeting: Translating a product roadmap into a funding request

- Stakeholder reporting: Communicating delivery confidence and scope risk

- Contract negotiation: Setting fixed-price or time-and-materials boundaries

- Vendor evaluation: Comparing internal vs. outsourced delivery costs

Knowing how to estimate team size accurately is equally important, since effort estimates directly inform headcount and cost projections.

Preparing for a project sizing session

Having defined the purpose and basics, it is critical to lay the groundwork for an effective sizing session. A poorly prepared session produces numbers that feel authoritative but carry no real accuracy. The inputs matter as much as the method.

Before the session begins, gather the following: finalized or draft user stories and epics, any available historical velocity data from prior sprints, a defined scope boundary (what is in and what is explicitly out), and access to technical documentation if architecture decisions affect effort. Missing any of these increases estimation variance significantly.

Who attends also shapes the outcome. The right room includes the product manager, at least one senior engineer or tech lead, a QA representative, and, for larger initiatives, a solution architect. Stakeholders can attend for context but should not drive consensus.

Workshops and team consensus methods improve estimation trust and accuracy, which is why structured facilitation matters more than most teams realize.

| Tool/method | Strength | Limitation |
|---|---|---|
| Planning Poker | High team buy-in | Slower for large backlogs |
| T-shirt sizing | Fast, visual | Low precision |
| PERT | Handles uncertainty | Requires three-point input |
| Bottom-up | High accuracy | Time-intensive |
| Reference class | Grounded in reality | Needs historical data |

To set up a productive session, follow these steps:

- Define and document the scope boundary before sending invites

- Select the sizing method appropriate to the project stage

- Gather all relevant user stories, epics, and technical constraints

- Invite the right participants and assign a neutral facilitator

- Set a clear agenda with time-boxed rounds for each backlog item

Pro Tip: Keep individual backlog items below five days of estimated effort. Anything larger should be decomposed further. Smaller items produce tighter consensus and reduce the risk of hidden complexity.
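
The five-day rule is easy to check mechanically before a session. The following is a minimal sketch, assuming backlog items carry a day-based estimate; the `BacklogItem` type, the item names, and the numbers are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass

# Threshold from the tip above. An item estimated at exactly five days
# is treated as acceptable here; that reading is an assumption.
MAX_ITEM_DAYS = 5.0


@dataclass
class BacklogItem:
    name: str
    estimate_days: float


def items_to_decompose(items, max_days=MAX_ITEM_DAYS):
    """Return the backlog items whose estimate exceeds the threshold."""
    return [item for item in items if item.estimate_days > max_days]


# Hypothetical backlog used only for illustration.
backlog = [
    BacklogItem("Login form", 2.0),
    BacklogItem("Payment integration", 8.0),
    BacklogItem("Profile page", 5.0),
]

for item in items_to_decompose(backlog):
    print(f"Decompose further: {item.name} ({item.estimate_days} days)")
```

Running a check like this before the session lets the team spend its time splitting the oversized items rather than discovering them mid-round.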

Common prep pitfalls include starting the session with vague requirements, missing the QA or infrastructure perspective, and skipping the agenda entirely. Using estimation consensus methods and running estimation workshops with retrospective data from previous cycles significantly improves calibration over time.

Step-by-step: How to size a software project

With your session prepped and the right team gathered, here is how to execute sizing in a structured way.

Four principles drive the process: break down large items, use PERT for uncertain tasks, use relative sizing for speed, and add a management reserve. They translate into the following steps:

- Identify and clarify requirements: Review each user story or epic with the team. Flag ambiguities and resolve them before estimating.

- Decompose into small tasks: Break epics into stories and stories into tasks. No single task should exceed five days.

- Select a sizing method: For early-stage work, use T-shirt sizing. For sprint-ready backlog items, use Planning Poker or bottom-up.

- Facilitate team estimation: Run structured rounds. For Planning Poker, reveal cards simultaneously to prevent anchoring.

- Review and adjust: Discuss outliers. A developer estimating eight points while others estimate two is a signal, not a problem. Resolve it through discussion.

- Add reserves for uncertainty: Apply a management reserve of 15 to 20% on top of the base estimate to account for unknown risks.

- Document and share: Record estimates, assumptions, and exclusions. Distribute to all stakeholders within 24 hours.
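
The aggregation and reserve steps above come down to simple arithmetic. Here is a rough sketch, assuming task estimates are expressed in days and the reserve is applied as a flat percentage of the base; the function name and the task figures are illustrative only.

```python
def total_with_reserve(task_days, reserve_rate):
    """Sum task-level estimates and add a management reserve on top.

    Returns a (base, reserve, total) tuple, all in days.
    """
    if not 0.0 <= reserve_rate <= 1.0:
        raise ValueError("reserve_rate must be a fraction between 0 and 1")
    base = sum(task_days)
    reserve = base * reserve_rate
    return base, reserve, base + reserve


# Hypothetical bottom-up task estimates, each at or under five days.
task_days = [3.0, 4.5, 2.0, 5.0, 1.5]

low = total_with_reserve(task_days, 0.15)
high = total_with_reserve(task_days, 0.20)

print(f"Base estimate:    {low[0]:.1f} days")
print(f"With 15% reserve: {low[2]:.1f} days")
print(f"With 20% reserve: {high[2]:.1f} days")
```

Reporting the base and the reserve as separate numbers keeps the buffer visible, which makes later calibration against actuals possible.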

For tasks with high uncertainty, PERT (Program Evaluation and Review Technique) provides a weighted average: (Optimistic + 4 × Most Likely + Pessimistic) / 6. This formula smooths extreme scenarios into a defensible single-point estimate.
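
As a worked example, the PERT weighted average can be sketched in a few lines. The function names and the sample inputs are hypothetical. The spread calculation, (Pessimistic - Optimistic) / 6, is the conventional PERT companion to the weighted average and is included as an assumption, since the article itself only gives the average.

```python
def pert_estimate(optimistic, most_likely, pessimistic):
    """Weighted three-point average: (O + 4M + P) / 6."""
    if not optimistic <= most_likely <= pessimistic:
        raise ValueError("expected optimistic <= most_likely <= pessimistic")
    return (optimistic + 4 * most_likely + pessimistic) / 6


def pert_std_dev(optimistic, pessimistic):
    """Conventional PERT spread, (P - O) / 6. Not part of the text above."""
    return (pessimistic - optimistic) / 6


# Hypothetical task: 2 days best case, 4 days most likely, 12 days worst case.
expected = pert_estimate(2, 4, 12)
spread = pert_std_dev(2, 12)

print(f"Expected effort: {expected:.1f} days (spread of about {spread:.1f} days)")
```

Note how the long pessimistic tail pulls the result above the most likely value of 4 days, which is the point of using three inputs instead of one.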

No item should be estimated at more than 5 days. Break it down further to increase accuracy.

Pro Tip: After each sprint, compare estimated versus actual effort and track the ratio over time. Teams that run structured estimation retrospectives typically close the accuracy gap within three to four cycles. Estimating effort at the task level also prevents the common mistake of counting only development time while ignoring design, QA, and deployment effort.

Common pitfalls and how to verify your sizing

Even with a solid process, pitfalls abound. Here is how to spot and correct them.

Size inflation and poor decomposition cause inaccurate estimates. Tracking actuals versus estimates regularly is the most reliable corrective mechanism available to any team.

The most frequent sizing errors include:

- Size inflation: Teams pad estimates defensively without documenting the rationale, making future calibration impossible

- Insufficient decomposition: Large, vague tasks hide complexity and produce wide variance in team estimates

- Ignoring non-coding time: Design typically consumes 10 to 15% of total effort; deployment and environment setup add another 5%; omitting these creates systematic underestimation

- Skipping historical calibration: Estimating in a vacuum, without reference to how similar features performed in previous sprints, removes the most grounding input available

- Single-person estimates: Estimates produced by one person, even a senior engineer, carry individual bias that team-based methods naturally correct

Statistic callout: After three sprints of tracked velocity, estimate accuracy typically improves to approximately ±20%, a significant reduction from the ±50% typical of initial estimates.

To verify sizing quality, compare estimated story points or hours against actuals at the end of each sprint. Calculate a velocity ratio (actual divided by estimated) and track it over time. A ratio consistently above 1.0 signals systematic underestimation; below 1.0 suggests padding. Both patterns are correctable once visible.
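
The ratio check described above can be sketched as follows. The sprint figures are hypothetical, and the 5% tolerance band around 1.0 is an assumption added so that small sprint-to-sprint noise is not read as a pattern; the article itself uses 1.0 as the dividing line.

```python
def estimation_ratio(actual, estimated):
    """Actual effort divided by estimated effort for one sprint."""
    if estimated <= 0:
        raise ValueError("estimated effort must be positive")
    return actual / estimated


def diagnose(ratios, tolerance=0.05):
    """Classify a series of ratios; the tolerance band is an assumption."""
    if all(r > 1.0 + tolerance for r in ratios):
        return "systematic underestimation"
    if all(r < 1.0 - tolerance for r in ratios):
        return "possible padding"
    return "no consistent pattern"


# Hypothetical (estimated, actual) effort in hours for three sprints.
sprints = [(30, 39), (32, 38), (35, 40)]
ratios = [estimation_ratio(actual, estimated) for estimated, actual in sprints]

for number, ratio in enumerate(ratios, start=1):
    print(f"Sprint {number}: ratio {ratio:.2f}")
print(f"Diagnosis: {diagnose(ratios)}")
```

Requiring every sprint to fall on the same side of 1.0 mirrors the word "consistently" in the text; a team could equally use a rolling average.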

Holding post-sprint retrospectives focused specifically on estimation quality, separate from the general sprint retrospective, accelerates calibration. Teams that invest in avoiding estimation mistakes and build a culture of honest post-mortems typically reach stable velocity within two to three months. Using project estimator tools to benchmark estimates against industry norms also helps teams identify whether their sizing is in a reasonable range before committing to stakeholders.

The uncomfortable truth about project sizing: Human bias, not just math

With the nuts and bolts of the process covered, it is essential to question what textbooks leave out. Most guidance on project sizing treats the problem as methodological: choose the right formula, follow the right steps, and accuracy follows. That framing is incomplete.

Initial human estimates are rarely accurate, while LLM-assisted tools show variable performance depending on context. The implication is that no method, however rigorous, compensates for a team that is not calibrated against its own historical performance.

Optimism bias leads engineers to estimate for ideal conditions. Anchoring causes the first number mentioned in a room to pull all subsequent estimates toward it. Organizational pressure, when a manager signals a preferred timeline before estimation begins, corrupts the process entirely. These are not edge cases. They are the default state of most sizing sessions.

Sizing improvement comes less from better math than from honest feedback and team recalibration.

Machine learning tools are beginning to assist with pattern recognition across large project datasets, but they require clean historical data that most organizations do not maintain. Human review remains the critical check. The teams that size most accurately are not the ones using the most sophisticated tools. They are the ones that run consistent estimation workshops, document their assumptions honestly, and treat every retrospective as a calibration event rather than a formality.

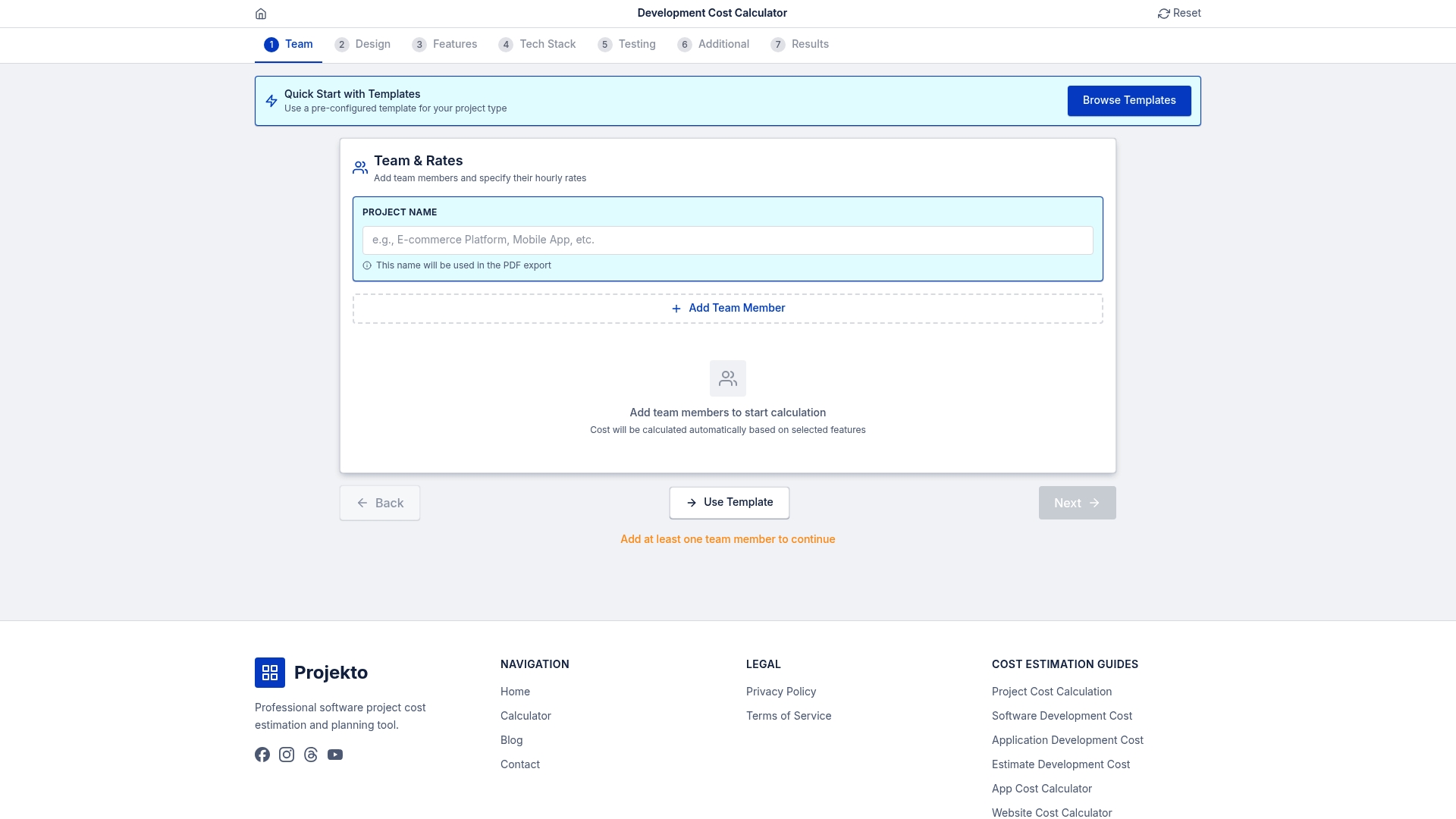

Estimate smarter: Tools for effortless project sizing

Ready to improve accuracy in your next estimate? Tap into these resources.

Running a structured sizing session is only part of the equation. Translating those estimates into a credible budget and timeline requires tools that reflect real development costs across different project types and team configurations.

The development cost calculator at Projecto provides a repeatable, transparent starting point for web and mobile app estimates, grounding your team's sizing output in current market data. For domain-specific projects, the event app cost estimator and the healthcare app calculator offer tailored benchmarks that account for compliance, integration complexity, and feature depth. These tools inform team discussions rather than replace them, giving stakeholders a data-backed reference before the first sprint is planned.

Frequently asked questions

What is the best method for sizing a software project?

The best method depends on project stage: T-shirt sizing works well for early, uncertain phases; Planning Poker or bottom-up estimation suits sprint-ready work where requirements are stable; and PERT fits individual tasks that carry high uncertainty.

How do I improve estimation accuracy in agile teams?

Keep tasks to five days or less, track velocity for at least three sprints, and run dedicated estimation retrospectives to calibrate the team's sizing patterns against actual delivery.

What should be included in the effort estimate besides coding?

A complete estimate must account for design at 10 to 15% of total effort, deployment and environment setup at approximately 5%, plus QA, documentation, and any integration or compliance work specific to the project.

How do you deal with estimation bias in project sizing?

Use historical data and team reviews to counteract individual bias, involve the full delivery team rather than relying on a single estimator, and recalibrate regularly through structured retrospectives tied to actual sprint outcomes.