Software project estimates fail so often that many teams simply accept budget overruns and missed deadlines as inevitable. Yet the problem isn’t just poor techniques or bad luck. Multiple hidden factors combine to derail even carefully planned estimates, from unclear requirements to cognitive biases that skew judgment. Understanding why estimation fails is the first step toward building more reliable budgets and timelines. This article reveals the key reasons software projects miss their marks and shows you how to address them systematically for better forecasting accuracy.

Table of Contents

- Human Factors And Process Issues Affecting Estimation Accuracy
- Practical Strategies To Improve Estimation Accuracy In Your Projects

Key takeaways

| Point | Details |
|---|---|
| Root causes | Vague requirements, optimism bias, and scope creep consistently undermine estimation accuracy |
| Human factors | Cognitive biases and inexperience amplify estimation errors beyond technical complexity |
| Method selection | Different estimation techniques have distinct failure modes that vary by project context |
| Continuous improvement | Regular estimate updates and historical data review dramatically improve forecasting reliability |
| Tool support | Estimation calculators and structured frameworks reduce guesswork and standardize the process |

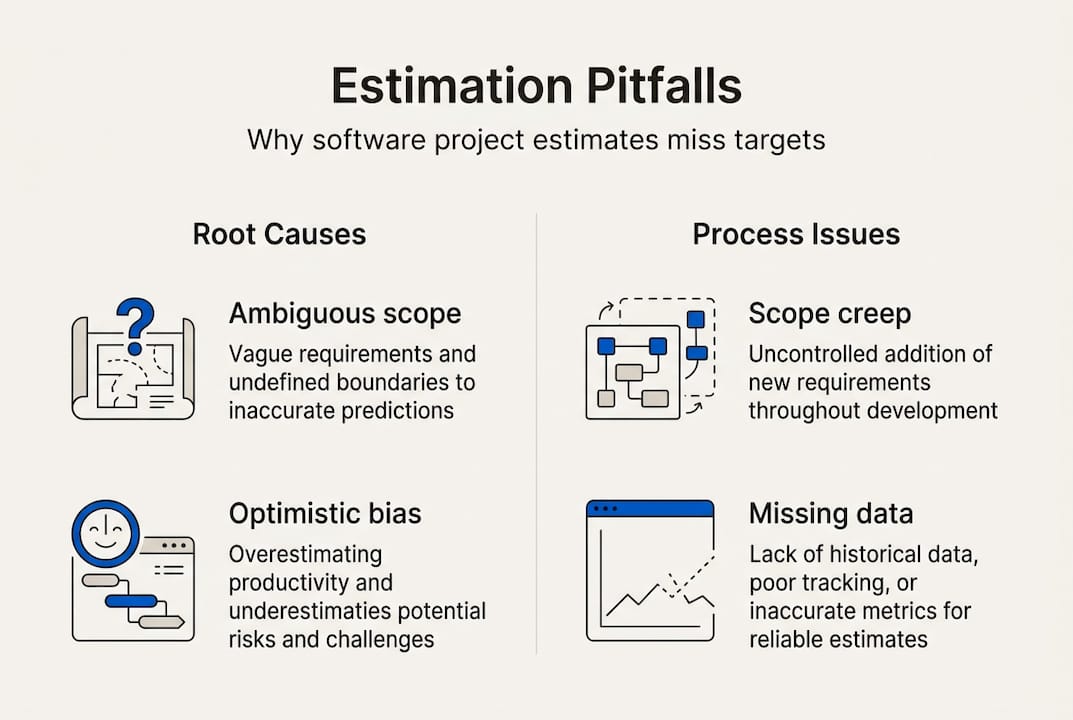

Common causes of estimation failure in software projects

Software estimation fails when foundational assumptions prove wrong or incomplete. Ambiguous requirements and unclear project scope consistently drive cost and time overruns because teams estimate against moving targets. When stakeholders describe features in vague terms or change their minds mid-project, developers must constantly recalibrate their work, making initial estimates obsolete.

Optimism bias creates another persistent problem. Teams naturally underestimate complexity or overrate their own productivity, leading to rosy projections that reality quickly crushes. A developer might think a feature takes two days because they focus on the happy path, ignoring edge cases, testing, and integration work that can triple the actual effort.

Scope creep adds unplanned features that push budgets and schedules far beyond original estimates. Stakeholders request “small changes” that seem trivial but cascade through multiple system components. Each addition compounds the estimation gap, yet teams rarely account for this inevitable drift when creating initial forecasts.

Miscommunication between stakeholders and developers produces incomplete or incorrect assumptions that poison estimates from the start. Business owners describe what they want in domain language, developers interpret through technical lenses, and the gap between these perspectives creates hidden work nobody estimated. By the time teams discover the mismatch, they’re already behind schedule.

Lack of historical data weakens estimation quality because teams have no baseline for comparison. Without records of past projects showing actual effort versus estimates, you’re guessing blindly rather than learning from experience. Organizations that ignore past lessons repeat the same estimation mistakes across multiple projects.

Pro Tip: Engage stakeholders early to clarify scope and document requirements with precision, using written acceptance criteria and visual mockups to eliminate ambiguity before estimation begins.

Human factors and process issues affecting estimation accuracy

Cognitive biases shape estimation in ways teams rarely recognize. The planning fallacy makes us believe our projects will proceed smoothly despite past evidence of complications. Anchoring bias locks teams onto initial numbers even when new information suggests different estimates. Optimism bias convinces us we’ll work faster than our own historical data suggests. These mental shortcuts feel intuitive but systematically skew estimates toward unrealistic optimism.

Inexperienced teams lack the context and pattern recognition needed for accurate forecasting. Junior developers haven’t encountered enough edge cases to anticipate hidden complexity. New project managers don’t recognize warning signs that signal estimate trouble ahead. Without accumulated wisdom from past projects, teams estimate based on incomplete mental models that miss crucial factors.

Poor process discipline erodes estimation reliability through shortcuts and omissions. Teams skip detailed task breakdowns because they seem tedious, then discover work they never accounted for. Nobody reviews estimates critically before committing to them. Updates happen sporadically or not at all as projects evolve. Each process gap introduces error that compounds across the project lifecycle.

Failure to update estimates as projects progress creates growing discrepancies between plan and reality. Teams treat initial estimates as fixed commitments rather than living documents that should reflect current knowledge. When actual progress diverges from projections, nobody recalibrates the remaining work, leaving stakeholders surprised by eventual overruns.

Steps to reduce human factors impact:

- Conduct bias awareness training so teams recognize their own estimation blind spots
- Build and maintain historical databases tracking estimates versus actuals across projects
- Use collaborative estimation techniques like planning poker to surface diverse perspectives
- Schedule regular re-estimation sessions at project milestones to update forecasts
- Implement process improvements that mandate detailed breakdowns and peer review

Estimation accuracy improves when teams acknowledge biases and leverage collective expertise rather than relying on individual judgment.

Pro Tip: Use planning poker or wideband Delphi techniques to minimize bias in team estimates. Both collect independent judgments first, then force a discussion of the assumptions behind any disagreement before the team converges on a number.
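As a rough illustration of how a planning poker round can trigger that discussion, the sketch below summarizes one round of votes and flags when the lowest and highest cards are far enough apart that those voters should explain their assumptions before a re-vote. The `summarize_round` helper, the card deck, and the "more than two cards apart" rule are all illustrative assumptions, not a standard.

```python
from statistics import median

# Hypothetical card deck (a common modified-Fibonacci set); use your team's cards.
DECK = [1, 2, 3, 5, 8, 13, 21]


def summarize_round(votes: dict[str, int], max_card_gap: int = 2) -> dict:
    """Summarize one planning poker round.

    votes maps a participant's name to the card they played. The round is
    flagged for discussion when the lowest and highest cards are more than
    max_card_gap positions apart in the deck (an assumed threshold).
    """
    if not votes:
        raise ValueError("at least one vote is required")
    unknown = [v for v in votes.values() if v not in DECK]
    if unknown:
        raise ValueError(f"votes not in deck: {unknown}")

    low, high = min(votes.values()), max(votes.values())
    gap = DECK.index(high) - DECK.index(low)
    return {
        "median": median(votes.values()),
        "low_voters": sorted(n for n, v in votes.items() if v == low),
        "high_voters": sorted(n for n, v in votes.items() if v == high),
        "needs_discussion": gap > max_card_gap,
    }


# Example round with made-up participants and votes.
round_one = {"Ana": 3, "Ben": 5, "Chen": 13, "Dee": 5}
result = summarize_round(round_one)
```

Here the 3 and the 13 sit three cards apart, so the round is flagged and Ana and Chen would each explain their reasoning before the team votes again.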

Comparing estimation methods: strengths and pitfalls

Software teams use several estimation approaches, each with distinct advantages and failure modes. Expert judgment relies on experienced practitioners making informed guesses based on intuition and past projects. It’s fast and requires minimal documentation, making it popular for quick ballpark estimates. However, experts bring their own biases and blind spots, and their accuracy depends heavily on how similar the new project is to their experience base.

Analogy-based estimation compares the current project to past similar work, adjusting for differences in scope or complexity. This method grounds estimates in actual historical data rather than pure judgment. It fails when projects involve significant innovation or when past projects weren’t tracked accurately. Teams also struggle to identify truly comparable projects and properly account for differences.

Parametric models use mathematical formulas and predefined metrics to calculate estimates based on project characteristics. Function points, story points, and lines of code feed into equations that output time and cost projections. These models provide consistency and can process complex calculations quickly. They fail when underlying assumptions don’t match project reality or when input data is inaccurate. Garbage in, garbage out applies forcefully here.
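To make the idea of a parametric model concrete, here is a minimal sketch using the Basic COCOMO formulas for "organic" (small, familiar) projects, one published member of this family. The article does not prescribe COCOMO; it is used here purely as an illustration, and the coefficients are the published defaults, which would need calibrating against your own history before the output deserves any trust.

```python
# Basic COCOMO, organic mode (Boehm, 1981):
#   effort   = 2.4 * KLOC ** 1.05     (person-months)
#   schedule = 2.5 * effort ** 0.38   (calendar months)
A, B, C, D = 2.4, 1.05, 2.5, 0.38


def basic_cocomo_organic(kloc: float) -> tuple[float, float]:
    """Return (effort in person-months, schedule in months) for a size in KLOC."""
    if kloc <= 0:
        raise ValueError("kloc must be positive")
    effort = A * kloc ** B
    schedule = C * effort ** D
    return effort, schedule


# The size input is itself an estimate, so compare two plausible guesses.
effort_10, schedule_10 = basic_cocomo_organic(10)
effort_15, schedule_15 = basic_cocomo_organic(15)
print(f"10 KLOC: {effort_10:.1f} person-months over {schedule_10:.1f} months")
print(f"15 KLOC: {effort_15:.1f} person-months over {schedule_15:.1f} months")
```

Running both size guesses side by side shows how directly an error in the input carries through to the output, which is the "garbage in, garbage out" risk in practice.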

Bottom-up estimation breaks projects into detailed tasks, estimates each component separately, then aggregates to a total. This granular approach surfaces hidden work and provides the most accurate results when done thoroughly. It’s also time-consuming and labor-intensive, requiring significant upfront investment. Teams often skip necessary detail to save time, undermining the method’s core advantage.
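The aggregation step can be sketched in a few lines. The breakdown below is a made-up example (the feature, task names, and person-day figures are all hypothetical); the point is only that the total is derived from the leaves rather than guessed at the top.

```python
def total_days(node) -> float:
    """Recursively sum leaf estimates (person-days) in a nested breakdown."""
    if isinstance(node, dict):
        return sum(total_days(child) for child in node.values())
    return float(node)


# Hypothetical work breakdown: nested dicts are groups, numbers are person-days.
checkout_feature = {
    "api": {"endpoint": 2, "validation": 1, "error handling": 1.5},
    "ui": {"form": 2, "empty and error states": 1},
    "testing": {"unit tests": 1.5, "integration tests": 2},
    "release": {"code review fixes": 1, "deployment": 0.5},
}

per_area = {area: total_days(tasks) for area, tasks in checkout_feature.items()}
grand_total = total_days(checkout_feature)

for area, days in per_area.items():
    print(f"{area}: {days} days")
print(f"total: {grand_total} days")
```

Listing testing and release work as their own branches is deliberate: those are the items that tend to vanish when a team estimates only the "build" portion of a feature.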

| Method | Strengths | Weaknesses | Failure Risk |
|---|---|---|---|
| Expert judgment | Fast, flexible, minimal documentation | Subjective bias, inconsistent, hard to justify | High for novel projects |
| Analogy-based | Grounded in reality, intuitive | Requires good historical data, struggles with innovation | Medium to high |
| Parametric | Consistent, handles complexity, scalable | Needs accurate inputs, rigid assumptions | Medium |
| Bottom-up | Most accurate, surfaces hidden work | Time-consuming, requires discipline | Low if done thoroughly |

Different estimation methods vary in accuracy and suitability depending on project type and available data, so matching method to context matters enormously.

Criteria for selecting the best estimation method:

- Project novelty: use bottom-up for innovative work, analogy-based for familiar domains
- Time available: expert judgment for quick estimates, detailed methods when time permits
- Data quality: parametric models need good inputs, analogy-based needs reliable history
- Stakeholder needs: some audiences demand detailed justification, others accept ranges
- Team capability: complex methods require training and discipline to execute properly

Practical strategies to improve estimation accuracy in your projects

Iterative estimation with regular checkpoints lets you update numbers based on actual progress rather than sticking with outdated initial guesses. Set milestones every two to four weeks where teams review what’s complete, what remains, and whether original estimates still hold. This continuous recalibration catches problems early when you can still adjust scope or resources to stay on track.
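One simple way to recalibrate at a checkpoint is to scale the remaining estimate by the overrun ratio observed on the work already finished. The sketch below assumes that remaining work will overrun at the same rate as completed work, which is a simplification, and the `reforecast` helper and its figures are hypothetical.

```python
def reforecast(completed: list[tuple[float, float]], remaining_estimate: float) -> float:
    """Project remaining effort from the overrun ratio seen so far.

    completed holds (estimated_days, actual_days) pairs for finished work.
    Assumes the remaining work overruns at the same rate (a simplification).
    """
    estimated = sum(e for e, _ in completed)
    actual = sum(a for _, a in completed)
    if estimated <= 0:
        raise ValueError("need completed work with a positive estimate")
    return remaining_estimate * (actual / estimated)


# Hypothetical checkpoint: 10 days estimated so far, 13 days actually spent.
done = [(3, 4), (5, 7), (2, 2)]
revised = reforecast(done, remaining_estimate=20)
print(f"remaining work: 20 days planned, about {revised:.0f} days at the current rate")
```

With a 1.3x overrun so far, the 20 remaining days become roughly 26, which is the kind of early signal that lets you adjust scope or resources while there is still room to act.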

Engage cross-functional teams for diverse perspectives that surface assumptions one discipline might miss. Developers see technical complexity, designers understand user experience effort, QA engineers anticipate testing needs. When all voices contribute to estimates, you get more complete pictures that account for work across the entire delivery pipeline.

Document estimation assumptions and risks clearly so stakeholders understand what could change and why. Write down what you’re assuming about requirements stability, team availability, technical feasibility, and external dependencies. Flag risks that could inflate estimates if they materialize. This transparency manages expectations and provides context when estimates need revision.

Incorporate buffer time for uncertainty and potential scope changes rather than presenting best-case scenarios as commitments. Add contingency percentages based on project risk: 10 to 20 percent for familiar work, 30 to 50 percent for innovative projects. Buffers aren’t padding or waste; they’re a realistic acknowledgment that software projects encounter unexpected complications.
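The contingency arithmetic is simple enough to sketch directly. The percentages come from the paragraph above; the `buffered_range` helper, the risk labels, and the 40-day base figure are illustrative assumptions.

```python
# Contingency ranges from the text, expressed as fractions of the base estimate.
CONTINGENCY = {
    "familiar": (0.10, 0.20),
    "innovative": (0.30, 0.50),
}


def buffered_range(base_days: float, risk: str) -> tuple[float, float]:
    """Return the (low, high) estimate after applying the contingency for a risk level."""
    if risk not in CONTINGENCY:
        raise ValueError(f"unknown risk level: {risk!r}")
    low_pct, high_pct = CONTINGENCY[risk]
    return base_days * (1 + low_pct), base_days * (1 + high_pct)


# Hypothetical 40-day base estimate for an innovative project.
low, high = buffered_range(40, "innovative")
print(f"plan for roughly {low:.0f} to {high:.0f} days")
```

Presenting the result as a range of about 52 to 60 days, instead of the bare 40, keeps the buffer visible to stakeholders instead of hiding it inside individual task estimates.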

Leverage estimation tools and historical data to ground forecasts in reality rather than pure judgment. Regular estimate revisions and estimation software improve budget and timeline control by standardizing calculations and surfacing patterns from past projects. Tools reduce arithmetic errors and apply consistent methodologies across estimates.

Top practices to implement immediately:

- Break work into small, estimable chunks of one to three days maximum
- Track actual effort against estimates to build organizational learning
- Involve the people doing the work in creating estimates
- Use ranges rather than single-point estimates to communicate uncertainty
- Review and update estimates at every sprint planning or project milestone
- Create estimation checklists covering common overlooked tasks
- Build in time for requirements clarification and rework
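For the "ranges rather than single-point estimates" practice in the list above, one widely used option is the three-point (PERT) estimate, sketched below. The article does not name a specific technique, so PERT is swapped in here as an example; the task figures are made up, and combining tasks this way assumes they are independent.

```python
from math import sqrt


def pert(optimistic: float, likely: float, pessimistic: float) -> tuple[float, float]:
    """Return (expected, standard deviation) for one task using the PERT weighting."""
    if not optimistic <= likely <= pessimistic:
        raise ValueError("expected optimistic <= likely <= pessimistic")
    expected = (optimistic + 4 * likely + pessimistic) / 6
    std_dev = (pessimistic - optimistic) / 6
    return expected, std_dev


# Hypothetical tasks as (optimistic, most likely, pessimistic) days.
tasks = [(1, 2, 5), (2, 3, 6), (0.5, 1, 3)]
results = [pert(*t) for t in tasks]

total_expected = sum(e for e, _ in results)
# Assuming independent tasks, variances add; take the square root for the total.
total_sd = sqrt(sum(sd ** 2 for _, sd in results))
low, high = total_expected - 2 * total_sd, total_expected + 2 * total_sd
print(f"expected {total_expected:.1f} days, likely range {low:.1f} to {high:.1f}")
```

The two-standard-deviation band is a convention, not a guarantee; its main value is that it gives stakeholders a range to plan around instead of a single number that implies false precision.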

Pro Tip: Regularly review past project estimates against actuals to refine future estimates, identifying the specific types of work your team consistently over- or underestimates.
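A review like this does not need special tooling to get started. The sketch below groups a handful of made-up records by work type and computes the ratio of actual to estimated effort; the record layout, the work-type labels, and the `overrun_by_type` helper are all hypothetical.

```python
from collections import defaultdict

# Hypothetical records: (work type, estimated days, actual days).
history = [
    ("frontend", 3, 3.5),
    ("frontend", 2, 2),
    ("integration", 2, 5),
    ("integration", 3, 6),
    ("backend", 4, 3),
]


def overrun_by_type(records):
    """Return the actual/estimate ratio per work type (above 1.0 means underestimated)."""
    totals = defaultdict(lambda: [0.0, 0.0])
    for work_type, estimated, actual in records:
        totals[work_type][0] += estimated
        totals[work_type][1] += actual
    return {t: actual / est for t, (est, actual) in totals.items() if est > 0}


ratios = overrun_by_type(history)
for work_type, ratio in sorted(ratios.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{work_type}: actuals are {ratio:.2f}x the estimate")
```

In this invented data set, integration work takes more than twice its estimate while backend work comes in under, which suggests applying a multiplier per work type instead of one blanket correction.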

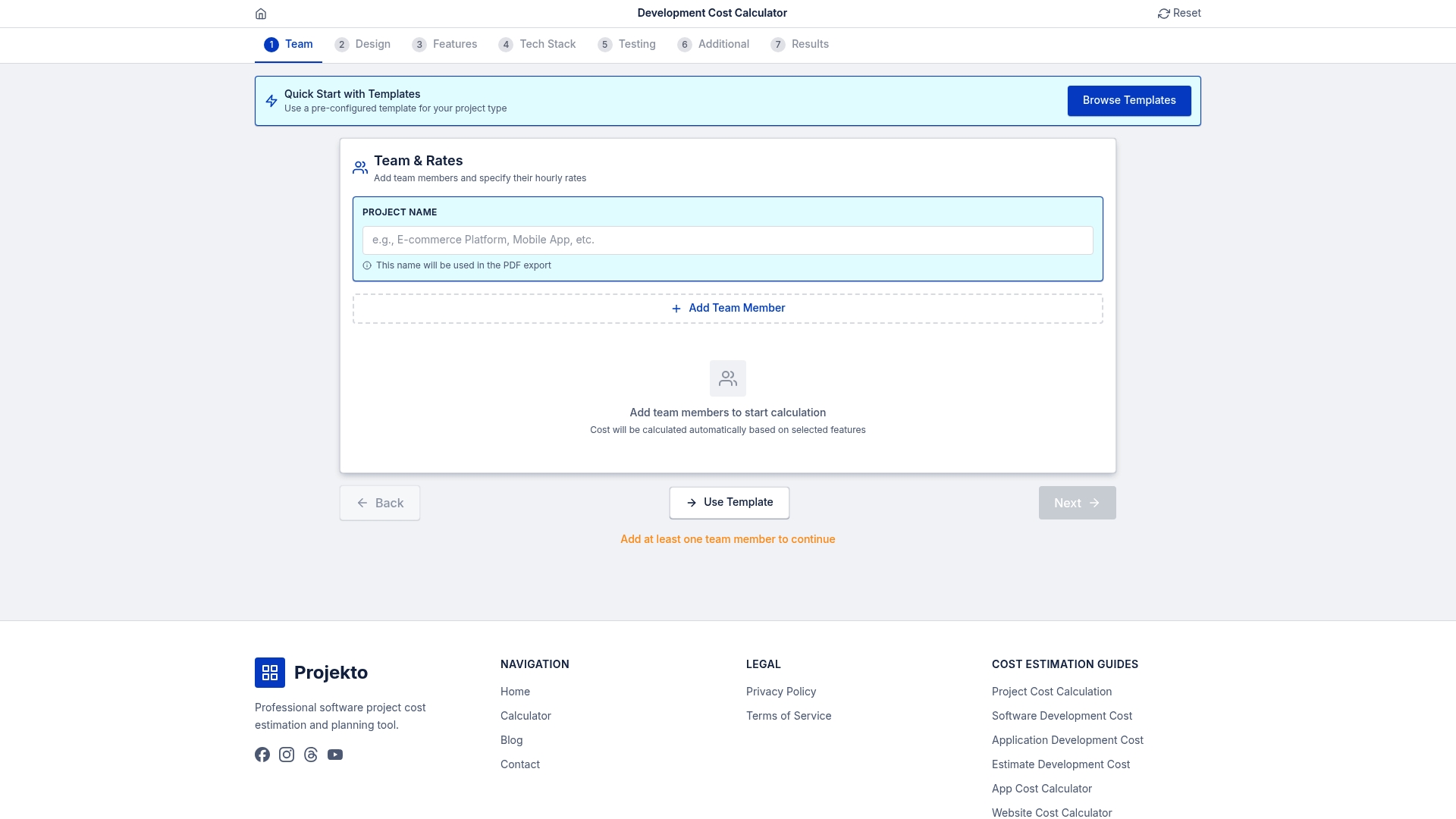

Streamline your software estimates with EstimateCalc

Applying these estimation strategies takes discipline and time that busy project managers often lack. EstimateCalc offers an easy-to-use development cost calculator tailored specifically for software projects, incorporating industry benchmarks and historical data to ground your estimates in reality. The tool supports various project types from AI applications to project management software, helping you quickly generate reliable forecasts.

EstimateCalc reduces guesswork by walking you through structured questions about scope, complexity, and requirements, then applying proven formulas to calculate time and budget ranges. You save hours compared to manual estimation while getting more accurate results backed by data from thousands of completed projects. The calculator becomes your estimation partner, catching factors you might overlook and providing confidence when presenting forecasts to stakeholders.

Frequently asked questions

Why do many projects go over budget despite careful planning?

Even careful planning fails when it’s based on incomplete information or optimistic assumptions that don’t survive contact with reality. Requirements evolve as stakeholders see working software and realize what they actually need. Hidden technical complexity emerges during implementation that nobody anticipated during planning. Teams also systematically underestimate integration work, testing effort, and time lost to meetings and interruptions.

How can I handle scope changes after a project has been estimated?

Build scope change processes into your initial estimate by including buffer time and establishing formal change request procedures. When stakeholders request additions, document the impact on timeline and budget, then negotiate which existing features to cut or which deadlines to extend. Never absorb scope changes silently. Always make the tradeoffs visible and get explicit approval for revised estimates.

What are the most reliable estimation techniques for uncertain projects?

For high-uncertainty projects, combine multiple techniques to triangulate more reliable ranges. Use expert judgment for a quick baseline, then validate it by analogy to similar past work if available. Apply bottom-up estimation to known components while adding larger buffers to uncertain areas. Consider timeboxing uncertain features with fixed effort budgets rather than trying to estimate unknowable complexity precisely.

Can estimation tools replace expert judgment?

Estimation tools augment rather than replace expert judgment by providing structure, consistency, and data-driven baselines. Tools excel at calculations, pattern matching against historical projects, and surfacing factors humans forget. Experts still need to interpret tool outputs, adjust for project-specific context, and make final decisions about estimates. The combination of tool rigor and human insight produces better results than either alone.

How often should estimates be updated during a project?

Update estimates at every significant milestone or sprint boundary, typically every two to four weeks depending on project duration. Also recalibrate whenever major scope changes occur, technical risks materialize, or actual progress significantly diverges from plan. Treat estimates as living documents that reflect current knowledge rather than fixed commitments locked at project start.