TL;DR:

- Most software projects overrun their estimates because of common cognitive biases and flawed models.

- Accurate estimation requires understanding effort versus duration, historical data, and organizational culture.

- Implementing structured tools, breaking projects into phases, and fostering a culture of honesty improve accuracy.

80% of software projects exceed their original estimates by 50% or more, yet most teams walk into new builds with the same flawed planning habits. For project managers and business owners, that gap between estimate and reality translates directly into budget overruns, missed deadlines, and strained client relationships. The good news is that the most damaging estimation mistakes follow predictable patterns. Understanding those patterns, and knowing how to counter them, is the difference between a project that delivers on time and one that quietly drains resources for months.

Table of Contents

- The top 5 software estimation mistakes

- Cognitive traps: Planning fallacy, optimism, and anchoring

- Mistaking effort for duration and other model errors

- Ignoring historical data and skipping task breakdowns

- Not accounting for hidden costs, risks, and buffer

- A fresh take: The underestimated role of humility in accurate software estimation

- Estimate smarter with the right tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Biases skew estimates | Planning fallacy and optimism bias commonly cause underestimates that lead to overruns. |
| Effort isn’t duration | Mistaking person-hours for calendar time causes timeline slips and budget surprises. |
| Break down tasks | Granular breakdowns and use of project history dramatically improve estimation accuracy. |
| Plan for the unknown | Buffers and risk planning protect budgets and timelines from predictable surprises. |
| Humility matters | Admitting what you don’t know leads to better estimation and a healthier team culture. |

The top 5 software estimation mistakes

With the problem defined, here are the most common estimation mistakes to watch for. Most teams assume their overruns are unique to their project, but public estimation datasets show estimation errors clustering around the same root causes across industries and team sizes. Variance is far higher at the project level than at the individual task level, which suggests the problem is usually structural rather than technical.

When you estimate software development cost without addressing these five core mistakes, you are building on an unstable foundation:

- Falling for cognitive biases such as the planning fallacy, optimism bias, and anchoring, which skew estimates toward best-case outcomes

- Confusing effort with duration, treating person-hours as if they map directly to calendar time

- Using uncalibrated estimation models that produce outputs far removed from your team's actual velocity

- Ignoring historical data and skipping granular task breakdowns in favor of high-level guesses

- Failing to account for hidden variables such as scope creep, technical debt, and resource gaps, and building no buffer to absorb them

Each of these mistakes compounds the others. A team anchored to an early low estimate will resist adding buffer, ignore historical overruns, and underestimate task complexity all at once. Addressing them individually is useful, but addressing them as a connected system is what produces reliable estimates.

Cognitive traps: Planning fallacy, optimism, and anchoring

Let's start by exploring how hidden mental shortcuts can sabotage your estimates. Cognitive biases including the planning fallacy, optimism bias, anchoring, and the Dunning-Kruger effect are among the most well-documented causes of estimation failure in software projects.

The planning fallacy causes teams to focus on the ideal scenario, the one where no one gets sick, requirements stay stable, and integrations work on the first attempt. In practice, none of those conditions hold consistently. Teams systematically underestimate how often the non-ideal scenario is actually the norm.

Optimism bias compounds this by making teams downplay risks they have not yet encountered. Junior developers in particular tend to underestimate tasks they have not done before, while senior developers often underestimate tasks they consider routine.

Anchoring is subtler but equally damaging. When a stakeholder floats an early budget number, or a developer makes an offhand estimate in a kickoff meeting, that number becomes a psychological anchor. Later, more rigorous estimates get pulled toward that anchor even when the data does not support it.

"Unconscious bias can add weeks of avoidable delay to any software project, regardless of team experience or methodology."

Practical counters include:

- Three-point estimation (PERT): Calculate a weighted average of optimistic, most likely, and pessimistic scenarios. This forces the team to articulate risk explicitly.

- Blind estimation rounds: Have team members submit estimates independently before any group discussion to prevent anchoring.

- Peer cross-checks: Use estimation consensus techniques to surface disagreements early.
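
The PERT weighting and the blind-round check can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed tool: it uses the standard PERT weights (O + 4M + P) / 6 and the common (P - O) / 6 spread approximation, while the 2x divergence threshold in the hypothetical `needs_discussion` helper is an assumption you would tune for your own team.

```python
def pert_estimate(optimistic: float, most_likely: float, pessimistic: float) -> tuple[float, float]:
    """Return the PERT weighted mean and approximate standard deviation for one task."""
    if not optimistic <= most_likely <= pessimistic:
        raise ValueError("expected optimistic <= most_likely <= pessimistic")
    # Weighted average: the most likely case counts four times as much as each extreme.
    mean = (optimistic + 4 * most_likely + pessimistic) / 6
    # Conventional PERT approximation of the uncertainty around that mean.
    std_dev = (pessimistic - optimistic) / 6
    return mean, std_dev


def needs_discussion(blind_estimates: dict[str, float], max_ratio: float = 2.0) -> bool:
    """Flag a task whose independently submitted (blind) estimates diverge widely.

    max_ratio is a hypothetical threshold: if the highest blind estimate is more
    than max_ratio times the lowest, the team should discuss before converging.
    """
    values = list(blind_estimates.values())
    return max(values) / min(values) > max_ratio


# Example with made-up numbers (hours).
mean, std_dev = pert_estimate(optimistic=16, most_likely=24, pessimistic=56)
print(f"PERT estimate: {mean:.1f} h ± {std_dev:.1f} h")
print(needs_discussion({"dev_a": 20, "dev_b": 24, "dev_c": 60}))
```

In this example the PERT mean (28 hours) lands above the most likely value (24 hours) because the pessimistic tail is long, which is exactly the risk a single "most likely" number would hide.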

Pro Tip: Before finalizing any estimate, run it through a time estimate validation process to catch optimism bias before it becomes a contractual commitment.

Mistaking effort for duration and other model errors

Cognitive traps are just the start. Technical misunderstandings hurt just as much. One of the most persistent errors in software estimation is treating effort, measured in person-hours, as equivalent to duration, measured in calendar days.

If a task requires 40 hours of effort and you assign one developer, it takes one week assuming full availability. But developers rarely operate at 100% capacity. Meetings, code reviews, context switching, and administrative work typically consume 30 to 40% of a developer's week. That 40-hour task now takes closer to 10 to 12 calendar days.
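
The arithmetic behind that range can be written out as a worked equation, assuming a 40-hour working week, an availability factor of 0.6 to 0.7 (the complement of the 30 to 40% overhead above), and one working week spanning seven calendar days:

```latex
\begin{aligned}
\text{duration (weeks)} &= \frac{\text{effort (hours)}}{\text{hours per week} \times \text{availability}} \\
\frac{40}{40 \times 0.7} &\approx 1.43 \text{ weeks} \approx 10 \text{ calendar days} \\
\frac{40}{40 \times 0.6} &\approx 1.67 \text{ weeks} \approx 11.7 \text{ calendar days}
\end{aligned}
```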

Algorithmic models like COCOMO require careful calibration to your team's specific context. Without calibration, they can produce errors by a factor of two in either direction, meaning a 3-month project could be estimated at 6 weeks or 6 months depending on the input assumptions.
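
One way to calibrate is to keep the model's structure and refit its multiplier to your own completed projects. The sketch below assumes Basic COCOMO in organic mode (effort = 2.4 × KLOC^1.05 person-months, from Boehm's original 1981 model) as the uncalibrated default. The `calibrate_multiplier` helper and the sample project history are hypothetical illustrations, not part of any COCOMO tool.

```python
import math

# Basic COCOMO, organic mode: effort (person-months) = A * KLOC ** B.
# These published defaults are the *uncalibrated* starting point.
DEFAULT_A = 2.4
DEFAULT_B = 1.05


def cocomo_effort(kloc: float, a: float = DEFAULT_A, b: float = DEFAULT_B) -> float:
    """Estimated effort in person-months for a project of `kloc` thousand lines of code."""
    return a * kloc ** b


def calibrate_multiplier(history: list[tuple[float, float]], b: float = DEFAULT_B) -> float:
    """Refit the multiplier A to past projects, keeping the exponent B fixed.

    history holds (kloc, actual_person_months) pairs from completed projects.
    The geometric mean of actual / KLOC**B is used because estimation errors
    are multiplicative: a 2x miss in either direction should weigh the same.
    """
    if not history:
        raise ValueError("need at least one completed project")
    log_ratios = [math.log(actual / kloc ** b) for kloc, actual in history]
    return math.exp(sum(log_ratios) / len(log_ratios))


# Hypothetical history: three past projects that each took roughly twice the default figure.
past_projects = [(12.0, 62.0), (20.0, 110.0), (8.0, 41.0)]
a_calibrated = calibrate_multiplier(past_projects)
print(f"default: {cocomo_effort(15.0):.0f} PM, calibrated: {cocomo_effort(15.0, a=a_calibrated):.0f} PM")
```

Fixing the exponent and refitting only the multiplier is a deliberately conservative choice: it needs very few past projects, whereas refitting both parameters would require a much larger history to be stable.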

Here is a structured approach to separating effort from duration:

- Estimate raw effort in hours per task, using three-point estimation

- Apply an availability factor (typically 60 to 70% for most development teams)

- Account for dependencies that force sequential rather than parallel work

- Add calendar overhead for holidays, onboarding, and sprint ceremonies
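
The four steps above can be combined into a small duration model. This is a sketch under simplifying assumptions: each task is worked by one developer, independent tasks run in parallel with no resource limits, a working day is 8 hours, and the task names and numbers are hypothetical. Calendar length is driven by the longest chain of dependent tasks (the critical path), not by total effort.

```python
def working_days(
    tasks: dict[str, tuple[float, list[str]]],
    availability: float = 0.65,
    hours_per_day: float = 8.0,
    overhead_days: float = 0.0,
) -> float:
    """Return the working days needed to finish all tasks.

    tasks maps a task name to (effort_hours, names_of_tasks_it_depends_on).
    Each task lasts effort / (hours_per_day * availability) days and starts only
    after all of its dependencies finish. overhead_days covers holidays,
    onboarding, and ceremonies not already captured by the availability factor.
    Assumes unlimited parallel developers and no dependency cycles.
    """
    productive_hours_per_day = hours_per_day * availability
    finish_day: dict[str, float] = {}

    def finish(name: str) -> float:
        # Memoized earliest finish time: latest dependency finish + own duration.
        if name not in finish_day:
            effort, deps = tasks[name]
            start = max((finish(dep) for dep in deps), default=0.0)
            finish_day[name] = start + effort / productive_hours_per_day
        return finish_day[name]

    return max(finish(name) for name in tasks) + overhead_days


# Hypothetical plan: integration can only start once both the API and the UI are done.
plan = {
    "api": (40.0, []),
    "ui": (32.0, []),
    "integration": (24.0, ["api", "ui"]),
}
total_effort = sum(effort for effort, _ in plan.values())
print(f"effort: {total_effort:.0f} h, duration: {working_days(plan, overhead_days=2):.1f} working days")
```

Here 96 hours of effort becomes roughly 14 working days because only the 64-hour critical path (API, then integration) sets the calendar. If a single developer does everything, the parallelism assumption no longer holds and the duration should be based on total effort instead.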

Pro Tip: Always ask whether your estimate accounts for holidays, recurring meetings, and multitasking. If it does not, your duration figure is almost certainly too short.

The table below illustrates the gap between estimated and actual durations across common project types:

| Project type | Estimated duration | Typical actual duration | Variance |
|---|---|---|---|
| MVP mobile app | 3 months | 4.5 to 5 months | +50 to 67% |
| API integration | 2 weeks | 3 to 4 weeks | +50 to 100% |
| Dashboard feature | 1 week | 10 to 14 days | +43 to 100% |
| Full platform rebuild | 6 months | 9 to 12 months | +50 to 100% |

Consulting a software sizing guide before locking in timelines can help you apply the right sizing metrics to your specific project type.

Ignoring historical data and skipping task breakdowns

Better models only help if the process feeds them good inputs, most notably past performance data and granular task breakdowns. Teams that rely on gut feel or high-level analogies without consulting their own historical performance data are ignoring the most reliable signal available to them.

Historical data provides a reality check. If your last three API integrations each took twice as long as estimated, that pattern is far more informative than any theoretical model. Using historical data alongside buffers of 10 to 20%, combined with bottom-up work breakdown structures and planning poker, consistently produces more accurate estimates than top-down guessing.

Key ways to structure and leverage past data:

- Maintain a project log that records estimated versus actual hours for every completed task

- Segment by task type (frontend, backend, QA, DevOps) to identify where your team consistently over or underestimates

- Use velocity data from Agile sprints to calibrate story point estimates against real delivery rates

- Run retrospectives that explicitly compare estimates to actuals and document the reasons for variance
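
A project log only pays off if it feeds back into the next estimate. The sketch below assumes a simple log of (task type, estimated hours, actual hours) records and derives a per-type correction factor from the median actual-to-estimate ratio; it also turns recent sprint velocities into a sprint forecast. The record layout, function names, and sample data are all hypothetical.

```python
import math
from collections import defaultdict
from statistics import median


def overrun_factors(log: list[tuple[str, float, float]]) -> dict[str, float]:
    """Median actual/estimated ratio per task type (1.5 means tasks ran 50% over)."""
    ratios: dict[str, list[float]] = defaultdict(list)
    for task_type, estimated_hours, actual_hours in log:
        ratios[task_type].append(actual_hours / estimated_hours)
    # The median keeps one disastrous task from skewing the whole category.
    return {task_type: median(values) for task_type, values in ratios.items()}


def adjusted_estimate(task_type: str, raw_hours: float, factors: dict[str, float]) -> float:
    """Scale a fresh estimate by the team's historical overrun for that task type.

    Task types with no history are left unadjusted (factor 1.0).
    """
    return raw_hours * factors.get(task_type, 1.0)


def sprints_needed(backlog_points: float, recent_velocities: list[float]) -> int:
    """Forecast sprints from the average of recently *delivered* story points."""
    average_velocity = sum(recent_velocities) / len(recent_velocities)
    return math.ceil(backlog_points / average_velocity)


# Hypothetical log entries: (task type, estimated hours, actual hours).
project_log = [
    ("backend", 20, 30),
    ("backend", 10, 20),
    ("backend", 16, 24),
    ("frontend", 12, 13),
    ("frontend", 8, 9),
]
factors = overrun_factors(project_log)
print(factors)
print(adjusted_estimate("backend", 40, factors))
print(sprints_needed(100, [18, 22, 20]))
```

Segmenting by task type matters because a single team-wide factor would blur a large backend overrun with a small frontend one; with this log a new 40-hour backend estimate is scaled up far more than a frontend estimate of the same size would be.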

The comparison below shows why bottom-up estimation consistently outperforms high-level approaches:

| Estimation approach | Accuracy | Effort required | Risk exposure |
|---|---|---|---|
| High-level analogy | Low | Minimal | High |
| Top-down parametric | Medium | Low | Medium |
| Bottom-up WBS | High | Significant | Low |
| Planning poker (Agile) | High | Medium | Low |

Running structured estimation workshops with your team is one of the most effective ways to combine historical data with collaborative task breakdown. Pairing this with a clear estimation scope guide ensures that no part of the project is left unaccounted for before work begins.
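
Bottom-up estimation is easiest to keep honest when the breakdown itself is data. A minimal sketch, assuming each leaf of the work breakdown structure carries a three-point estimate: leaves are scored with the PERT formula and parents sum their children. Summing variances assumes task uncertainties are independent, which real projects often violate, so treat the resulting spread as a lower bound. The `WorkItem` class and the example tasks are hypothetical.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class WorkItem:
    name: str
    # (optimistic, most_likely, pessimistic) hours; only leaf items need one.
    estimate: Optional[tuple[float, float, float]] = None
    children: list["WorkItem"] = field(default_factory=list)


def rollup(item: WorkItem) -> tuple[float, float]:
    """Return (expected_hours, std_dev_hours) for a WBS node and everything under it."""
    if not item.children:
        if item.estimate is None:
            raise ValueError(f"leaf task {item.name!r} has no estimate")
        optimistic, likely, pessimistic = item.estimate
        return (optimistic + 4 * likely + pessimistic) / 6, (pessimistic - optimistic) / 6
    expected, variance = 0.0, 0.0
    for child in item.children:
        child_expected, child_std = rollup(child)
        expected += child_expected
        variance += child_std ** 2  # independent uncertainties add as variances
    return expected, math.sqrt(variance)


# Hypothetical feature broken into leaf tasks.
auth = WorkItem("authentication", children=[
    WorkItem("login form", estimate=(4, 6, 14)),
    WorkItem("password reset", estimate=(2, 3, 10)),
])
expected, std_dev = rollup(auth)
print(f"{auth.name}: {expected:.1f} h ± {std_dev:.1f} h")
```

Raising an error on a leaf without an estimate is intentional: it surfaces unestimated scope during the workshop instead of letting it silently count as zero hours.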

Not accounting for hidden costs, risks, and buffer

Beyond known tasks, smart teams plan for the unknown. The majority of budget overruns are not caused by poor execution on planned work. They are caused by scope changes, technical debt that surfaces mid-project, resourcing gaps, and integration surprises that no one budgeted for.

Small projects succeed 90% of the time, while large projects succeed less than 10% of the time. One of the most actionable responses to this data is to divide large builds into smaller, independently deliverable phases, each with its own estimate and buffer.

Here is a practical process for building a robust contingency buffer:

- Complete your bottom-up estimate before adding any buffer

- Categorize risks by likelihood and impact, using a simple 3x3 risk matrix

- Add 10% buffer for well-understood projects with stable requirements

- Add 15 to 20% buffer for projects with novel technology, unclear requirements, or new team members

- Review and re-estimate at each sprint boundary or project phase gate
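
The buffer steps above can be made mechanical so that the percentage comes from the risk register rather than from negotiation. In this sketch the 3x3 matrix scores each risk as likelihood × impact (1 to 9); the cut-offs that map scores to the 10%, 15%, and 20% tiers, along with the class and function names, are hypothetical and should be tuned against your own history.

```python
from dataclasses import dataclass

LEVEL = {"low": 1, "medium": 2, "high": 3}


@dataclass
class Risk:
    description: str
    likelihood: str  # "low", "medium", or "high"
    impact: str      # "low", "medium", or "high"

    def score(self) -> int:
        """Position on a 3x3 risk matrix: 1 (low/low) to 9 (high/high)."""
        return LEVEL[self.likelihood] * LEVEL[self.impact]


def buffer_rate(risks: list[Risk]) -> float:
    """Pick a contingency rate from the worst-scoring risk (hypothetical cut-offs)."""
    worst = max((risk.score() for risk in risks), default=0)
    if worst >= 6:  # e.g. medium likelihood x high impact, or worse
        return 0.20
    if worst >= 3:  # e.g. medium x medium, or low x high
        return 0.15
    return 0.10     # well-understood work with only minor risks


def buffered_estimate(bottom_up_hours: float, risks: list[Risk]) -> float:
    """Apply the buffer only after the bottom-up estimate is complete."""
    return bottom_up_hours * (1 + buffer_rate(risks))


# Hypothetical risk register for a 400-hour phase.
register = [
    Risk("new payment SDK", likelihood="medium", impact="high"),
    Risk("designer on leave", likelihood="low", impact="medium"),
]
print(buffer_rate(register), buffered_estimate(400, register))
```

Re-running this at each phase gate lets the buffer shrink as risks are retired, instead of carrying the same percentage from kickoff to delivery.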

Pro Tip: Agile projects report a 39% success rate, compared with 11% for traditional waterfall projects, largely because Agile builds re-estimation into the process by default.

Hidden costs that teams most often miss include third-party API licensing fees, security audit requirements, App Store review cycles, accessibility compliance work, and the integration effort required for external systems.

"Divide big builds into smaller phases. Small projects succeed nine times more often than large ones, and the estimation accuracy improves with every completed phase."

A fresh take: The underestimated role of humility in accurate software estimation

Now that we have covered tactical mistakes, it is worth stepping back to consider what is really behind accurate estimation. Most guidance focuses on process: use better models, break tasks down further, add a buffer. That advice is correct, but it addresses the symptom rather than the cause.

The root cause of most estimation failures is not a missing spreadsheet or the wrong methodology. It is an organizational culture that treats a changed estimate as a sign of incompetence rather than improved insight. When teams feel pressure to defend their original numbers, they stop updating them honestly. That rigidity is where projects go off the rails.

The project estimator mindset that produces the most reliable results is one built on intellectual honesty. Teams that openly acknowledge uncertainty, communicate risks early, and treat revised estimates as evidence of good judgment rather than failure consistently outperform teams with more sophisticated tools but less psychological safety.

The contrarian view here is that estimation failure is primarily a failure of organizational psychology, not process. Fixing the process without fixing the culture produces marginally better numbers but does not solve the underlying problem. Build a team environment where saying "I was wrong, here is the updated estimate" is rewarded, and your estimation accuracy will improve faster than any methodology change can deliver.

Estimate smarter with the right tools

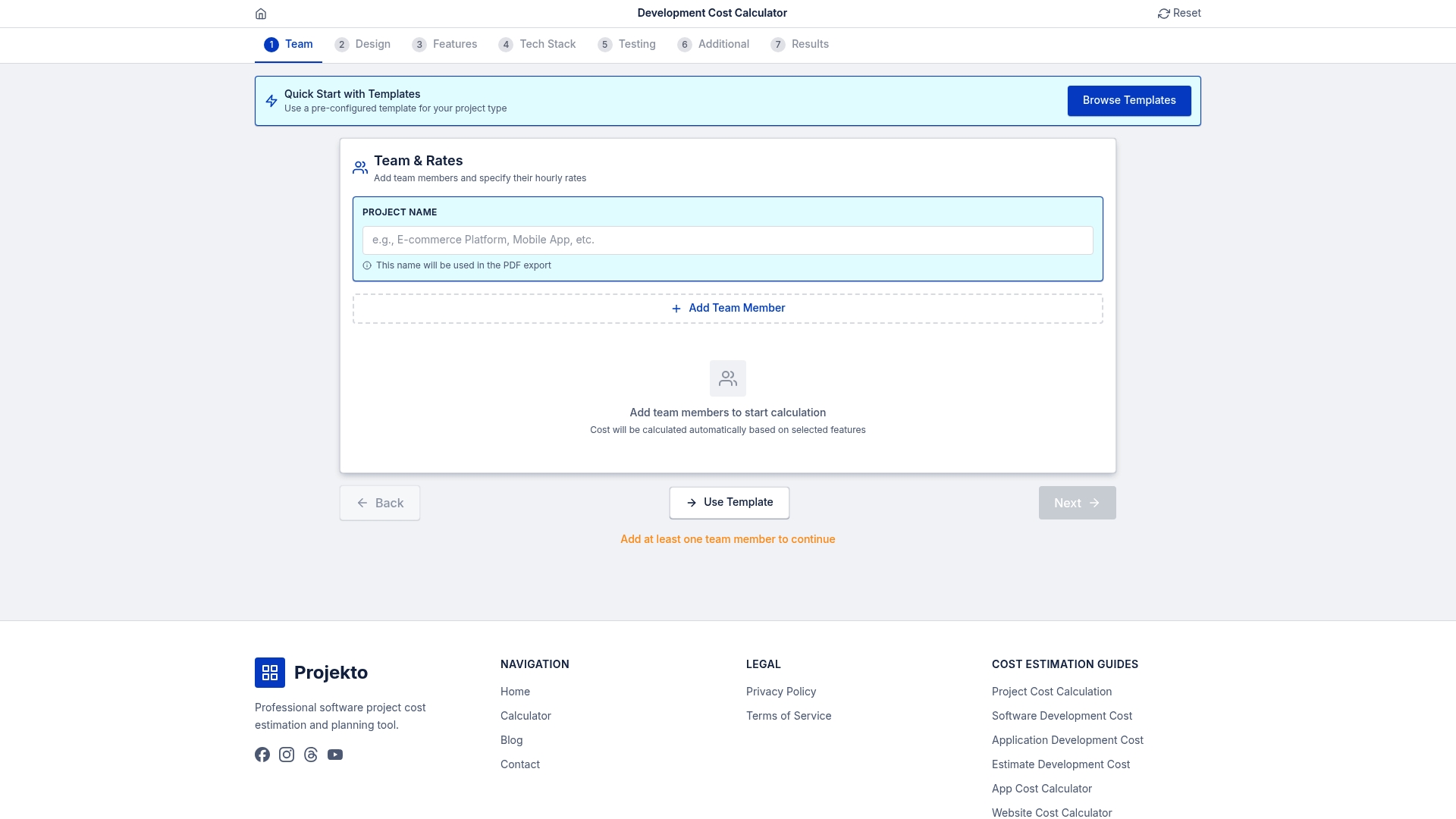

Ready to move from learning to action? Structured estimation tools reduce personal bias and make it straightforward to model multiple scenarios, apply buffers, and stress-test your assumptions before committing to a timeline or budget.

The development cost calculator at Projecto gives you an instant, structured baseline for web and mobile app projects. If you are building in a specific vertical, the event app cost calculator and the healthcare app cost calculator provide domain-specific estimates that account for the compliance, integration, and feature complexity typical of those sectors. Start with a structured estimate and adjust from there rather than working backward from a number someone said in a meeting.

Frequently asked questions

What is the most common software estimation mistake?

The most common mistake is underestimating due to optimism bias and the planning fallacy, which cause teams to anchor on best-case scenarios and ignore the frequency of real-world complications.

How do expert-based and algorithmic estimates fail?

Expert judgment is prone to bias, while algorithmic models like COCOMO require calibration to your team's specific context or they can be off by a factor of two in either direction.

How much buffer should I add to my software estimates?

Adding 10 to 20% contingency buffer is the standard recommendation, with the higher end applying to projects involving new technology, unclear requirements, or unfamiliar team configurations.

Does project size affect estimation accuracy?

Yes. Small projects succeed 90% of the time while large projects succeed less than 10% of the time, making project decomposition one of the most effective risk-reduction strategies available.

Can software estimation mistakes be prevented?

Many are preventable by combining historical data with granular breakdowns, regular re-estimation at phase gates, and a team culture that treats revised estimates as a sign of good judgment rather than poor planning.