TL;DR:

- Effective estimates are range-based, reflecting real uncertainty and improving decision-making.

- Data-driven models and historical information enhance accuracy and objectivity in project estimation.

- Structured frameworks and team collaboration reduce bias, increase transparency, and improve estimation reliability.

Project overruns are not random events. 80% of software projects exceed their initial estimates by 50%, and the root cause is almost always poor estimation practice. For project managers and business stakeholders, inaccurate estimates erode budgets, shatter delivery timelines, and damage trust with clients and leadership alike. Understanding what separates a reliable estimate from a risky guess is not optional. This article breaks down the essential qualities that define effective estimation, giving you a practical framework to improve budget accuracy and schedule confidence on your next web or mobile app project.

Table of Contents

- Realism: Anchoring estimates in ranges, not single points

- Data-driven approach: Leveraging history and models

- Structured methodology: Reducing bias through frameworks

- Risk awareness: Factoring in buffers, scope, and unknowns

- Collaboration and transparency: Involving the right experts

- A pragmatic perspective: Why hybrid and iterative estimation wins out

- Take estimation further with the right tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Use estimate ranges | Range-based estimates build trust and account for real project uncertainty. |
| Base on data and models | Estimates backed by historical data and parametric models are consistently more accurate. |
| Apply structured frameworks | Using proven estimation methods helps reduce bias and ensures repeatability. |
| Factor in all risks | Effective estimates always include buffers for unknowns, dependencies, and non-coding tasks. |
| Collaborate for consensus | Involving teams and SMEs improves accuracy and alignment across stakeholders. |

Realism: Anchoring estimates in ranges, not single points

The most common estimation mistake is treating a project like a known quantity when it is not. Single-point estimates, where a team commits to one fixed number, create a false sense of certainty. When reality diverges from that number, trust collapses and scope disputes follow. Range-based estimates are the professional standard because they acknowledge what is genuinely unknown at the time of planning.

Effective estimates provide ranges, with accuracy improving from ±50% at early stages to ±10-15% at detailed planning levels. This progression is not a weakness. It is a feature that reflects how knowledge accumulates over a project lifecycle. Early estimates serve feasibility decisions. Later estimates serve contracting and resourcing.

PMI recommends progressive elaboration, starting with Rough Order of Magnitude ranges for feasibility and refining as more project details emerge. This approach prevents teams from locking into premature commitments before requirements are stable.
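As a rough illustration of how the same point estimate widens or narrows by stage, here is a minimal sketch. The percentages come from the figures above; the stage names and the 1,000-hour example are assumptions made for illustration only.

```python
# Uncertainty bands by planning stage. The percentages come from the text
# above; the stage names are illustrative labels, not a standard.
STAGE_UNCERTAINTY = {
    "early_feasibility": 0.50,  # about ±50%
    "detailed_planning": 0.15,  # about ±10-15%; the upper end is used here
}


def estimate_range(point_estimate: float, stage: str) -> tuple[float, float]:
    """Return (low, high) bounds around a point estimate for a given stage."""
    spread = STAGE_UNCERTAINTY[stage]
    return point_estimate * (1 - spread), point_estimate * (1 + spread)


# Hypothetical 1,000-hour point estimate viewed at two stages.
early = estimate_range(1000, "early_feasibility")
detailed = estimate_range(1000, "detailed_planning")
print(f"Early:    {early[0]:.0f} to {early[1]:.0f} hours")
print(f"Detailed: {detailed[0]:.0f} to {detailed[1]:.0f} hours")
```

The point estimate stays the same in both calls. Only the width of the range changes as requirements stabilize.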

Key benefits of range-based estimation:

- Reduces over-optimism by surfacing best-case and worst-case scenarios

- Gives stakeholders a realistic decision-making window

- Protects the team from accountability for unknowns outside their control

- Builds credibility by demonstrating analytical rigor

Avoiding common estimation pitfalls starts with accepting that no estimate is a guarantee. Understanding the role of scope clarity in narrowing those ranges is equally important.

Pro Tip: Always present estimates as a range with a stated confidence level. A statement like "we estimate 14 to 18 weeks at 80% confidence" is far more defensible than a single deadline.
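One way to arrive at a range with a stated confidence level is a simple Monte Carlo simulation over per-task three-point estimates. The article does not prescribe this technique, so treat the sketch below as one option. The task figures are hypothetical, and it assumes tasks run in sequence and vary independently.

```python
import random


def simulate_range(tasks, confidence=0.80, runs=10_000, seed=42):
    """Estimate a (low, high) total-duration range at the given confidence.

    tasks: list of (optimistic, most_likely, pessimistic) durations in weeks.
    Each run draws every task from a triangular distribution and sums them.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    totals = sorted(
        sum(rng.triangular(low, high, mode) for low, mode, high in tasks)
        for _ in range(runs)
    )
    tail = (1 - confidence) / 2  # share of runs cut from each end
    low_index = int(tail * runs)
    high_index = int((1 - tail) * runs) - 1
    return totals[low_index], totals[high_index]


# Hypothetical three-task plan, durations in weeks.
tasks = [(3, 4, 6), (5, 6, 9), (4, 5, 8)]
low, high = simulate_range(tasks)
print(f"We estimate {low:.1f} to {high:.1f} weeks at 80% confidence")
```

If tasks share risks, for example the same unstable API, the independence assumption will understate the true spread.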

Data-driven approach: Leveraging history and models

Beyond realism, effective estimates are rooted in evidence. Intuition and gut feel have a role in estimation, but they should never be the primary input. Historical project data, parametric models, and industry benchmarks transform estimation from guesswork into a repeatable, auditable process.

Parametric models such as COCOMO II, along with reference class forecasting, improve objectivity and accuracy. Parametric models apply mathematical relationships between project size, complexity, and effort, while reference class forecasting benchmarks a project against the actual outcomes of similar past projects. These methods are not perfect, but they introduce structure that reduces individual bias. COCOMO II is accurate within 20% for 70% of organic projects (small teams working in a familiar environment), which represents a significant improvement over unstructured expert guesses.

| Method | Data required | Accuracy range | Best for |
|---|---|---|---|
| Analogous estimation | Past project records | ±30-50% | Early-stage feasibility |
| Parametric (COCOMO II) | Size metrics, cost drivers | ±20% | Mid-stage planning |
| Reference class forecasting | Industry benchmarks | ±15-25% | Portfolio-level decisions |
| Bottom-up estimation | Full WBS breakdown | ±10-15% | Detailed project planning |

Blending quantitative methods with expert insight produces the best outcomes. A model may flag that a feature set is larger than expected, while an experienced developer can confirm whether that flag reflects real complexity. Learning how to estimate software costs accurately means treating models as inputs, not final answers.
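To make the parametric approach concrete, here is a minimal sketch of the COCOMO II effort equation, PM = A × Size^E × ΠEM with E = B + 0.01 × ΣSF. The constants are the published COCOMO II.2000 calibration values. The 10 KSLOC project size and the scale-factor sum of 18.97 (assumed here to represent all-nominal ratings) are illustrative assumptions, and any real use should be calibrated against your own history.

```python
import math

# Published COCOMO II.2000 calibration constants; treat them as defaults
# to recalibrate against your own project history.
A = 2.94
B = 0.91


def cocomo2_effort(ksloc, scale_factor_sum, effort_multipliers=()):
    """Person-months: PM = A * Size^E * product(EM), with E = B + 0.01 * sum(SF)."""
    exponent = B + 0.01 * scale_factor_sum
    return A * ksloc ** exponent * math.prod(effort_multipliers)


# Hypothetical 10 KSLOC project. 18.97 is assumed here as the sum of the five
# scale factors at nominal ratings; no effort multipliers means all nominal (1.0).
effort = cocomo2_effort(ksloc=10, scale_factor_sum=18.97)

# Treat the model output as an input: wrap it in the ±20% band cited above.
low, high = effort * 0.8, effort * 1.2
print(f"{effort:.1f} person-months (range {low:.1f} to {high:.1f})")
```

An experienced developer would then review the result against what they know about the feature set before anyone commits to it.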

Pro Tip: Maintain a project history log that captures original estimates, final actuals, and the reasons for variance. This data becomes your most valuable estimation asset over time. Tools built for estimators and app budgeting can help structure this process.
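A history log does not need to be elaborate. The sketch below shows one possible shape for it. The `ProjectRecord` name, its fields, and the sample entries are hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class ProjectRecord:
    name: str
    estimated_hours: float
    actual_hours: float
    variance_reason: str

    @property
    def variance_pct(self) -> float:
        """Overrun (+) or underrun (-) as a percentage of the original estimate."""
        return (self.actual_hours - self.estimated_hours) / self.estimated_hours * 100


# Hypothetical entries for illustration only.
history = [
    ProjectRecord("Booking app", 800, 1000, "Payment API changed mid-project"),
    ProjectRecord("Marketing site", 200, 190, "Reused existing templates"),
]

for record in history:
    print(f"{record.name}: {record.variance_pct:+.1f}% ({record.variance_reason})")
```

Recording the reason next to the number is what makes the log useful later, because it shows whether a variance came from the estimate or from a change in scope.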

Structured methodology: Reducing bias through frameworks

Once grounded in data, estimates improve further with the right frameworks. Cognitive biases, particularly over-optimism and anchoring, consistently distort estimates when teams rely on informal processes. Structured methodologies counteract these biases by standardizing how effort is broken down and reviewed.

Four frameworks dominate professional software estimation:

- Bottom-up WBS estimation: Decomposes the project into individual tasks, estimates each one, and aggregates upward. Highly accurate but time-intensive.

- Three-point PERT estimation: Combines optimistic (O), most-likely (M), and pessimistic (P) values into a weighted average, conventionally (O + 4M + P) / 6. Effective for managing uncertainty.

- Planning Poker: A consensus-based agile technique where team members privately choose story-point values, reveal them at the same time, then discuss discrepancies. The simultaneous reveal reduces anchoring bias.

- Wideband Delphi: An iterative expert-consensus method in which estimates are refined through structured rounds of discussion and revision.

Structured frameworks like bottom-up WBS and three-point PERT deliver measurably higher accuracy than ad hoc methods, particularly for web and mobile app projects where feature complexity varies significantly.
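The three-point PERT calculation is simple enough to sketch in a few lines. This uses the classic PERT weights, where the expected value is (O + 4M + P) / 6 and the standard deviation is approximated as (P − O) / 6. The task figures are hypothetical.

```python
def pert_estimate(optimistic: float, most_likely: float, pessimistic: float):
    """Return (expected, standard_deviation) using the classic PERT weights."""
    expected = (optimistic + 4 * most_likely + pessimistic) / 6
    std_dev = (pessimistic - optimistic) / 6
    return expected, std_dev


# Hypothetical task: 10 days best case, 14 days most likely, 24 days worst case.
expected, std_dev = pert_estimate(optimistic=10, most_likely=14, pessimistic=24)
print(f"Expected: {expected:.1f} days, standard deviation: {std_dev:.1f} days")
```

Note that the expected value lands above the most-likely figure. A long pessimistic tail pulls the estimate upward, which is the behavior that counters over-optimism.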

| Framework | Bias reduction | Time to apply | Ideal use case |
|---|---|---|---|
| Bottom-up WBS | High | High | Detailed sprint planning |
| Three-point PERT | Medium | Medium | Risk-sensitive milestones |
| Planning Poker | High | Low | Agile backlog refinement |
| Wideband Delphi | Very high | Medium | Complex or novel projects |

"The best estimation framework is the one your team will actually use consistently. Consistency beats perfection in estimation methodology."

Exploring team-based estimation and understanding the steps for accurate estimation will help you select and implement the right approach.

Risk awareness: Factoring in buffers, scope, and unknowns

Even the most methodical estimates fail if they ignore risk. Ambiguous requirements, late-stage scope changes, third-party dependencies, and integration complexity are among the most common sources of estimation error in web and mobile projects. Effective estimates treat risk as a first-class input, not an afterthought.

Buffers of 10-25% and explicit inclusion of non-coding work such as design, testing, and project management lead to substantially more accurate estimates. Many teams underestimate because they only account for feature development hours, ignoring the full effort required to deliver a production-ready application.

Risk-aware estimates address the following:

- Ambiguous requirements: Flag unclear specifications and assign higher uncertainty ranges to those features

- Scope creep: Build change control assumptions into the estimate documentation

- Third-party dependencies: Identify APIs, vendors, or integrations that could introduce delays

- Non-development effort: Include design, QA, DevOps, and project management as explicit line items

- Known unknowns: Reserve contingency budget for issues that are predictable in category but not in specifics

Understanding software sizing best practices provides a strong foundation for identifying where risk is concentrated. Regularly reviewing estimation reliability against actuals also helps teams calibrate their buffers over time.

Pro Tip: Separate your contingency reserve from your base estimate in all stakeholder-facing documents. This makes risk visible and gives you a defensible basis for drawing on reserves when issues arise.
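The sketch below shows one way to keep non-development line items explicit and the contingency reserve separate from the base. The function name, the line items, and the hour figures are hypothetical. The 20% rate is simply one value inside the 10-25% band mentioned above.

```python
def build_estimate(dev_hours, non_dev_hours, contingency_rate):
    """Return base estimate and contingency reserve as separate figures.

    non_dev_hours: dict of explicit line items (design, QA, and so on).
    contingency_rate: fraction of the base, e.g. 0.10 to 0.25.
    """
    base = dev_hours + sum(non_dev_hours.values())
    contingency = base * contingency_rate
    return {"base": base, "contingency": contingency, "total": base + contingency}


# Hypothetical figures in hours.
estimate = build_estimate(
    dev_hours=600,
    non_dev_hours={"design": 120, "qa": 150, "devops": 50, "project_management": 80},
    contingency_rate=0.20,
)
print(f"Base: {estimate['base']:.0f} h")
print(f"Contingency reserve: {estimate['contingency']:.0f} h")
print(f"Total: {estimate['total']:.0f} h")
```

In this example the non-development items add 400 hours to 600 hours of feature work, which shows how much a development-only estimate would have missed.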

Collaboration and transparency: Involving the right experts

With technical rigor in place, lasting success depends on how teams collaborate and communicate their estimates. Estimates produced in isolation, whether by a single architect or a project manager working alone, are systematically less accurate than those produced through structured team involvement. The reason is straightforward: no single person holds all the knowledge needed to estimate a complex software project.

Using Wideband Delphi and involving subject matter experts increases accuracy by 18% compared with closed-door estimation. This improvement comes from surfacing blind spots, challenging assumptions, and incorporating domain-specific knowledge that generalists may miss.

Key practices for collaborative, transparent estimation:

- Document all assumptions: Every estimate should include a written list of the assumptions it depends on

- Involve developers early: Engineers who will execute the work provide the most grounded effort estimates

- Align with stakeholders before finalizing: Shared understanding of scope prevents misaligned expectations at delivery

- Version-control your estimates: Track how estimates evolve as requirements change, and communicate those changes explicitly

- Use structured review sessions: Walk stakeholders through the estimate line by line to surface disagreements early
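Documenting assumptions and versioning estimates can be as lightweight as an append-only record. The following is a minimal sketch of that idea. The class names, fields, and sample project are hypothetical, and a spreadsheet or a document under version control would serve the same purpose.

```python
from dataclasses import dataclass, field


@dataclass
class EstimateVersion:
    version: int
    low_weeks: float
    high_weeks: float
    assumptions: list[str]
    change_note: str = ""


@dataclass
class EstimateHistory:
    project: str
    versions: list[EstimateVersion] = field(default_factory=list)

    def revise(self, low_weeks, high_weeks, assumptions, change_note=""):
        """Append a new version instead of overwriting the previous one."""
        version = EstimateVersion(
            len(self.versions) + 1, low_weeks, high_weeks, list(assumptions), change_note
        )
        self.versions.append(version)
        return version


# Hypothetical project and assumptions.
log = EstimateHistory("Event app")
log.revise(14, 18, ["Designs approved before development starts"])
log.revise(16, 21, ["Designs approved before development starts",
                    "Offline mode limited to read-only data"],
           change_note="Offline mode added to scope")

for v in log.versions:
    print(f"v{v.version}: {v.low_weeks} to {v.high_weeks} weeks. {v.change_note}")
```

Because earlier versions are never overwritten, stakeholders can see exactly which scope change moved the range and when.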

"Transparency in estimation is not just a communication best practice. It is a risk management strategy that reduces rework, disputes, and costly late-stage surprises."

Building a culture of estimation consensus across your team is one of the highest-leverage investments a project manager can make.

A pragmatic perspective: Why hybrid and iterative estimation wins out

Having outlined what makes an estimate effective, here is a perspective grounded in real project experience: no single estimation method is universally superior, and teams that treat estimation as a one-time event rather than an ongoing process will consistently underperform.

Hybrid approaches that combine algorithmic models with expert judgment work best for web and mobile projects. Algorithmic models provide objectivity and consistency. Expert judgment provides context, pattern recognition, and the ability to flag when a model's assumptions do not fit the current situation. Neither alone is sufficient.

The teams that estimate most accurately are those that treat each completed project as a calibration opportunity. They capture variance data, analyze root causes, and update their estimation models accordingly. This iterative feedback loop is what separates organizations that consistently deliver on time and on budget from those that perpetually struggle with overruns.
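The feedback loop described above can start with something as small as a calibration factor. The sketch below uses the median ratio of actual to estimated effort. This is one simple choice among many, and the history figures are hypothetical.

```python
from statistics import median


def calibration_factor(history):
    """Median ratio of actual to estimated effort across completed projects."""
    return median(actual / estimated for estimated, actual in history)


# Hypothetical (estimated_hours, actual_hours) pairs from past projects.
history = [(800, 1000), (200, 190), (500, 650)]

factor = calibration_factor(history)
raw_estimate = 400  # hours, before calibration
calibrated = raw_estimate * factor
print(f"Calibration factor: {factor:.2f}")
print(f"Raw: {raw_estimate} h, calibrated: {calibrated:.0f} h")
```

The median is used rather than the mean so that a single badly overrun project does not dominate the adjustment. The factor should be recomputed each time a project closes.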

Avoiding software estimation mistakes is less about finding the perfect method and more about building a disciplined, learning-oriented estimation culture. The goal is not a perfect first estimate. The goal is an organization that gets measurably better at estimation with every project it completes.

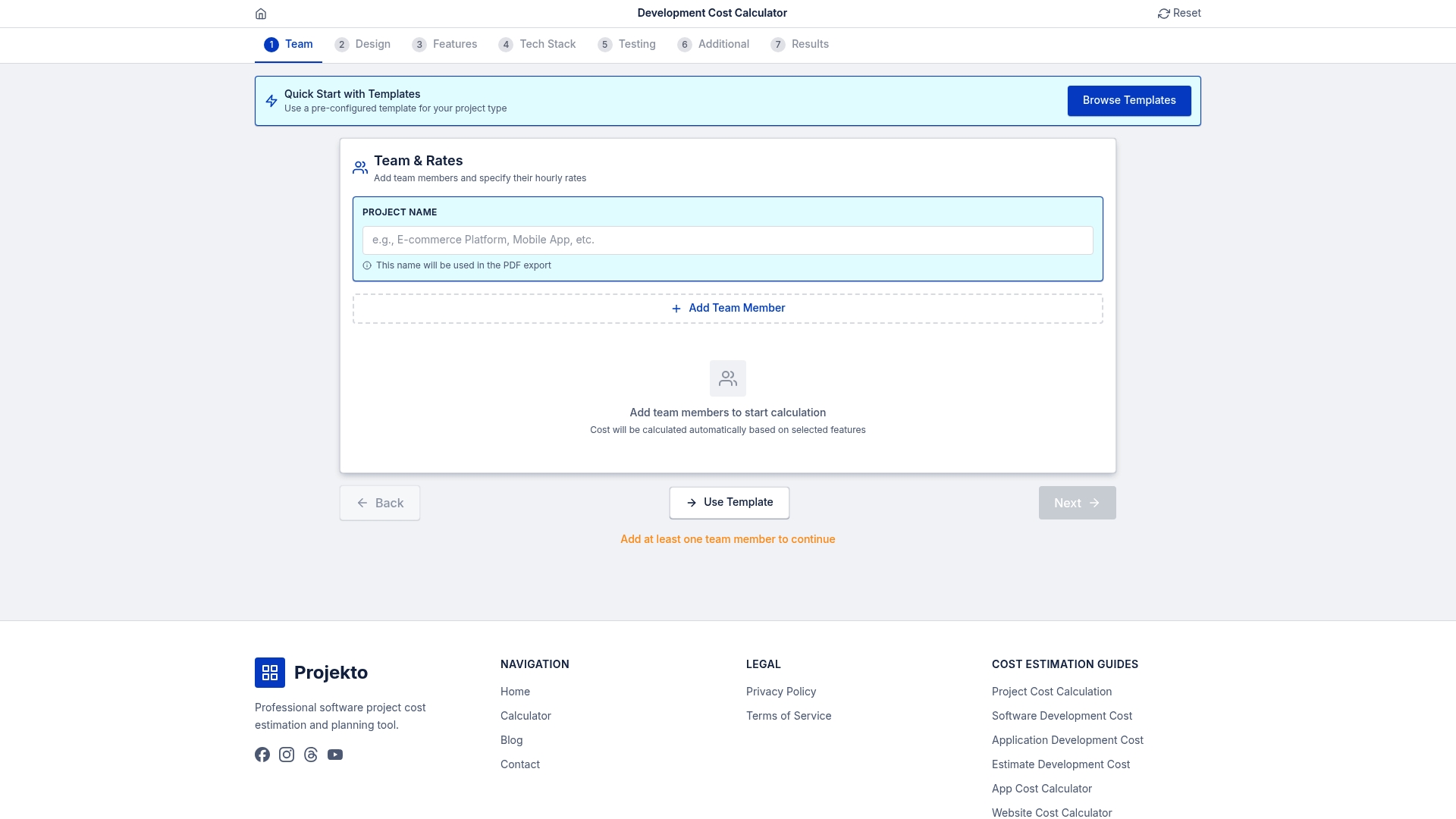

Take estimation further with the right tools

Applying these qualities consistently requires more than process knowledge. It requires tools that support structured input, transparent documentation, and rapid iteration across project scenarios.

The Development Cost Calculator from Projecto gives project managers and stakeholders a structured way to generate range-based, data-informed estimates for web and mobile app projects in minutes. Whether you are scoping a new platform or refining an existing budget, explore EstimateCalc to see how the tool supports every quality covered in this article. You can even generate a targeted event app estimate to see the methodology in action before committing to a full project scope.

Frequently asked questions

What is a range-based estimate and why is it better than a single-point estimate?

A range-based estimate provides upper and lower bounds that reflect real uncertainty, making it more accurate and credible than a single fixed number that ignores unknowns. Stakeholders can make better-informed decisions when they understand the full range of possible outcomes.

How much buffer should be included in software project estimates?

Buffers of 10-25% are recommended to account for risks, unknowns, and scope changes, with the exact percentage depending on project complexity and requirements stability. Higher ambiguity warrants a larger buffer.

Which estimation frameworks are most accurate for web and mobile app projects?

Bottom-up and three-point estimation are considered the most accurate for detailed software planning, with bottom-up WBS delivering ±10-15% accuracy when requirements are well-defined. Agile teams often complement these with Planning Poker for sprint-level granularity.

How does team collaboration improve estimation accuracy?

Including subject matter experts and using consensus methods like Wideband Delphi increases accuracy by 18% compared to closed-door estimation, primarily by surfacing blind spots and reducing individual cognitive bias. Structured review sessions further align stakeholder expectations before work begins.

What role do historical data and models play in estimation?

Historical data and models replace guesswork with evidence. Parametric models such as COCOMO II bring estimates to within 20% of actuals for most organic projects by applying empirical relationships between project size and effort. Historical data from past projects provides the benchmarks that make these models reliable in practice.