TL;DR:

- Flawed estimation processes are the main cause of software project overruns and failures.

- Proper preparation, structured review, and continuous improvement are essential for accurate estimates.

- Using hybrid estimation methods and the right tools enhances confidence and reduces risks.

Software projects fail at a striking rate: only 34% of organizations consistently produce accurate estimates, and most projects overrun their budgets and timelines by 50% or more. These aren't random failures. They trace back to flawed estimation processes that go unchallenged until it's too late to course-correct. For business decision-makers and project managers, a structured estimation review isn't optional; it's the mechanism that separates predictable delivery from costly surprises. This guide covers what to prepare before reviewing an estimation, a step-by-step review process, the most common pitfalls to watch for, and how to verify accuracy and build continuous improvement into your workflow.

Table of Contents

- What you need before reviewing project estimations

- Step-by-step process to review a project estimation

- Spotting and troubleshooting common estimation pitfalls

- Verifying estimation accuracy and ensuring continuous improvement

- A pragmatic take: Iteration beats perfection

- Estimate with confidence using the right tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Preparation matters most | Having clear requirements and historic data is essential for an effective estimation review. |
| Review is a process | Systematic steps, including risk identification and recalibration, increase estimate reliability. |
| Common pitfalls | Biases and missing buffers are key causes of inaccurate estimates and overruns. |
| Iterate for accuracy | Update estimates as scope clarifies and use feedback loops for continuous improvement. |

What you need before reviewing project estimations

Before you can meaningfully evaluate an estimation, you need the right inputs in place. Reviewing a number without context produces false confidence. The following checklist covers the minimum information required to conduct a credible estimation review.

Prerequisites checklist:

- Fully documented project requirements (functional and non-functional)

- Historical data from comparable past projects

- Clearly defined estimation scope, including what is and isn't included

- Stakeholder alignment on priorities, constraints, and acceptable risk levels

- Team capacity assumptions, including planned leave and parallel commitments

- A breakdown of both coding and non-coding efforts

Estimates often fail precisely because one or more of these inputs was missing before the review began.

| Information needed | Purpose | Suggested source |
|---|---|---|
| Requirements documentation | Defines scope boundaries | Product owner, BA team |
| Historical project data | Provides calibration baseline | Project management system |
| Team velocity/capacity | Grounds effort in reality | Sprint records, HR data |
| Risk register | Surfaces unknowns early | Risk management process |
| Estimation method used | Enables technique critique | Estimation lead |

For edge cases involving novel technology or unclear requirements, Wideband Delphi or reference class forecasting are better suited. Scope creep requires a formal change review process rather than ad hoc adjustments. One distinction that is frequently blurred is effort versus duration: effort measures the total person-hours required, while duration measures calendar time. Estimates that convert effort to duration as if the team works at 100% utilization, instead of roughly 85% (allowing for meetings, reviews, and interruptions), routinely miss their targets.
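To make the effort-versus-duration distinction concrete, here is a minimal Python sketch that converts person-hours into working days. The function name `duration_working_days`, the 8-hour working day, and the assumption that every team member is fully assigned to the project are illustrative choices, not a standard; only the roughly 85% utilization figure comes from the text above.

```python
# Minimal sketch: convert effort (person-hours) into duration (working days).
# Assumptions (illustrative, not from the source): an 8-hour working day and
# every team member assigned full time. The 0.85 default reflects the ~85%
# utilization figure discussed in the article.

def duration_working_days(effort_hours: float, team_size: int,
                          utilization: float = 0.85,
                          hours_per_day: float = 8.0) -> float:
    """Return calendar duration in working days for a given total effort."""
    if team_size <= 0:
        raise ValueError("team_size must be positive")
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    # Productive hours the whole team actually delivers per working day.
    productive_hours_per_day = team_size * hours_per_day * utilization
    return effort_hours / productive_hours_per_day


naive = duration_working_days(1360, team_size=4, utilization=1.0)
realistic = duration_working_days(1360, team_size=4)
print(f"100% utilization: {naive:.1f} days; 85% utilization: {realistic:.1f} days")
```

For 1,360 person-hours and a four-person team, this yields 42.5 working days at 100% utilization but 50 days at 85%, a difference of a week and a half that a naive conversion silently drops.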

Non-coding work is the most underestimated category in software projects. Testing, code reviews, documentation, deployment, and stakeholder communication can account for 30 to 40% of total project effort. A thorough review of estimation scope must include these activities explicitly.
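One arithmetic trap when adding non-coding work: if testing, reviews, documentation, deployment, and communication make up 30 to 40% of total effort, you cannot simply add 30 to 40% of the coding estimate. The hypothetical helpers below, a sketch rather than a prescribed formula, solve for the total instead.

```python
# Minimal sketch (hypothetical helper names): size non-coding work when it is
# expressed as a share of TOTAL effort, not as a share of coding effort.

def total_effort_hours(coding_hours: float, non_coding_share: float) -> float:
    """Total effort such that non-coding work makes up `non_coding_share` of it."""
    if not 0.0 <= non_coding_share < 1.0:
        raise ValueError("non_coding_share must be in [0, 1)")
    return coding_hours / (1.0 - non_coding_share)


def non_coding_hours(coding_hours: float, non_coding_share: float) -> float:
    """Hours for testing, reviews, documentation, deployment, and communication."""
    return total_effort_hours(coding_hours, non_coding_share) - coding_hours


for share in (0.30, 0.40):
    extra = non_coding_hours(600, share)
    naive = 600 * share  # the common mistake: a percentage of coding effort
    print(f"share {share:.0%}: non-coding {extra:.0f} h (naive add-on {naive:.0f} h)")
```

With 600 coding hours, a 30 to 40% share of total effort implies roughly 257 to 400 non-coding hours, while the naive add-on of 30 to 40% of coding effort yields only 180 to 240.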

Pro Tip: The fastest red flags in any estimation are the absence of a contingency buffer and zero line items for non-coding work. If you see either, treat the entire estimate as incomplete until those gaps are addressed.
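Both red flags are mechanical enough to check automatically when estimates live in a structured template. This sketch assumes a hypothetical `LineItem` record with a `category` label; the label names are placeholders for whatever your own template uses.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    name: str
    hours: float
    # Hypothetical category labels; adapt to your own estimate template.
    category: str  # "coding", "non_coding", or "contingency"


def red_flags(items: list[LineItem]) -> list[str]:
    """Return the two fast red flags (missing buffer, no non-coding work), if present."""
    flags = []
    if not any(i.category == "contingency" and i.hours > 0 for i in items):
        flags.append("no contingency buffer")
    if not any(i.category == "non_coding" and i.hours > 0 for i in items):
        flags.append("no non-coding line items")
    return flags


estimate = [
    LineItem("Checkout API", 120, "coding"),
    LineItem("Mobile UI", 200, "coding"),
]
print(red_flags(estimate))  # ['no contingency buffer', 'no non-coding line items']
```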

Step-by-step process to review a project estimation

With all prerequisites in hand, here's how to systematically review a project estimation without missing critical gaps.

- Verify requirements coverage. Confirm that every functional and non-functional requirement maps to an estimation line item. Gaps here directly become scope creep later.

- Analyze the estimation method used. Identify whether the team used an algorithmic, expert-judgment, bottom-up, top-down, or hybrid approach, and assess whether that method fits the project's complexity.

- Check resource allocation assumptions. Validate that team size, skill levels, and availability align with what the estimation assumes. Mismatched assumptions are a leading source of overruns.

- Conduct a risk assessment overlay. Map identified risks to specific estimation line items. Unquantified risks should trigger a buffer addition or a formal risk response plan.

- Run stakeholder validation. Present the estimate to key stakeholders for a structured review. Surface disagreements early rather than discovering them mid-project.

- Recalibrate where needed. If any step reveals gaps, adjust the estimate formally and document the rationale. Undocumented changes create accountability problems later.
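The first step, requirements coverage, reduces to a set difference once each line item records which requirement IDs it addresses. The mapping format and the IDs below are hypothetical; a minimal sketch:

```python
def uncovered_requirements(requirement_ids: set[str],
                           line_items: dict[str, set[str]]) -> list[str]:
    """Return requirement IDs that no estimation line item claims to cover."""
    # Union of every requirement ID referenced by any line item.
    covered: set[str] = set().union(*line_items.values())
    return sorted(requirement_ids - covered)


requirements = {"FR-1", "FR-2", "FR-3", "NFR-1"}  # hypothetical IDs
line_items = {                                      # hypothetical mapping
    "User login": {"FR-1"},
    "Order history": {"FR-2", "FR-3"},
}
print(uncovered_requirements(requirements, line_items))  # ['NFR-1']
```

Non-functional requirements such as the uncovered `NFR-1` above are the ones most often left without a line item, which is why the check includes both kinds.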

The technique you choose also affects how readily the team reaches consensus on the estimate. The table below compares the main approaches.

| Technique | Pros | Cons |
|---|---|---|
| Algorithmic (COCOMO II) | Defensible, repeatable | Requires calibration data |
| Expert judgment | Fast, contextual | Prone to optimism bias |
| Bottom-up | High accuracy (+10% vs top-down) | Time-intensive |
| Top-down | Quick for early-stage scoping | Less precise |
| Hybrid | Balances speed and accuracy | Requires skilled facilitation |

Algorithmic methods like COCOMO II are defensible but require proper calibration against your organization's historical data. Expert judgment is faster but consistently bias-prone without structured facilitation. Bottom-up estimation, where individual tasks are estimated and rolled up, delivers measurably better accuracy. Understanding software sizing is a prerequisite for effective bottom-up work.
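For reference, the COCOMO II post-architecture effort equation is PM = A × Size^E × ΠEM, with E = B + 0.01 × ΣSF, where Size is in thousands of source lines of code (KSLOC), SF are the five scale factors, and EM are the effort multipliers. The sketch below uses the published COCOMO II.2000 defaults (A = 2.94, B = 0.91) and nominal scale-factor ratings; treat those numbers as starting points to recalibrate against your own history, as noted above, and verify them against the COCOMO II model definition before relying on them.

```python
import math

# COCOMO II.2000 post-architecture defaults. Assumption: these are starting
# points only; recalibrate A and B against your organization's history.
A = 2.94
B = 0.91

# Scale factors at their "nominal" ratings.
NOMINAL_SCALE_FACTORS = {
    "PREC": 3.72,  # precedentedness
    "FLEX": 3.04,  # development flexibility
    "RESL": 4.24,  # architecture / risk resolution
    "TEAM": 3.29,  # team cohesion
    "PMAT": 4.68,  # process maturity
}


def scale_exponent(scale_factors: dict[str, float], b: float = B) -> float:
    """E = B + 0.01 * sum of scale factors."""
    return b + 0.01 * sum(scale_factors.values())


def effort_person_months(ksloc: float,
                         scale_factors: dict[str, float] = NOMINAL_SCALE_FACTORS,
                         effort_multipliers: tuple[float, ...] = (),
                         a: float = A, b: float = B) -> float:
    """PM = A * Size^E * product(EM); no multipliers means all ratings nominal (1.0)."""
    if ksloc <= 0:
        raise ValueError("ksloc must be positive")
    exponent = scale_exponent(scale_factors, b)
    return a * ksloc ** exponent * math.prod(effort_multipliers)


print(f"E = {scale_exponent(NOMINAL_SCALE_FACTORS):.4f}")  # E = 1.0997
print(f"50 KSLOC, all nominal: {effort_person_months(50):.0f} person-months")
```

Because E is about 1.10 at nominal ratings, effort grows slightly faster than size: doubling KSLOC multiplies the effort estimate by roughly 2.14.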

Pro Tip: Hybrid approaches that combine algorithmic baselines with expert review offer the best balance of defensibility and practical accuracy. They also make it easier to explain your numbers to non-technical stakeholders.
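One way to operationalize a hybrid approach, sketched here under stated assumptions: roll task-level estimates up bottom-up, compare the total with an algorithmic baseline expressed in the same unit, and route large disagreements to facilitated expert review. The function names and the 25% tolerance are hypothetical; a threshold drawn from your own variance history would be more defensible.

```python
def bottom_up_total(task_hours: dict[str, float]) -> float:
    """Roll individual task estimates up into a single bottom-up figure."""
    return sum(task_hours.values())


def divergence(algorithmic_hours: float, bottom_up_hours: float) -> float:
    """Relative gap between the bottom-up figure and the algorithmic baseline."""
    if algorithmic_hours <= 0:
        raise ValueError("algorithmic baseline must be positive")
    return abs(bottom_up_hours - algorithmic_hours) / algorithmic_hours


def needs_expert_review(algorithmic_hours: float, bottom_up_hours: float,
                        tolerance: float = 0.25) -> bool:
    """Flag the estimate for facilitated review if the gap exceeds `tolerance`.

    The 25% default is a hypothetical threshold, not an industry standard.
    """
    return divergence(algorithmic_hours, bottom_up_hours) > tolerance


tasks = {"Auth": 80.0, "Payments": 160.0, "Reporting": 60.0}  # hypothetical
bottom_up = bottom_up_total(tasks)            # 300.0 hours
print(needs_expert_review(420.0, bottom_up))  # True: gap is about 29%
```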

Spotting and troubleshooting common estimation pitfalls

Knowing the review process is half the battle, but recognizing pitfalls is what separates a functional review from a checkbox exercise.

Most common estimation pitfalls:

- Optimism bias: Teams consistently underestimate task complexity, especially for unfamiliar technologies.

- Anchoring: Early figures, even rough ones, anchor subsequent estimates and distort final numbers.

- Ignoring technical debt: Existing code quality issues add friction that estimates rarely capture.

- Missing integration effort: Third-party APIs, legacy systems, and cross-team dependencies are routinely underestimated.

- Omitting non-coding tasks: Testing, documentation, and deployment are frequently left out entirely.

- No formal buffer: Contingency is treated as a sign of weakness rather than a professional standard.

"80% of software projects overrun their original time estimates by 50% or more, and teams typically underestimate effort by 25 to 40%. These aren't edge cases. They are the statistical norm."

Underestimation carries more risk than overestimation in most business contexts. An overestimate creates negotiation room and may cost a deal. An underestimate creates delivery failure, erodes client trust, and generates cost overruns that damage margins and relationships simultaneously. Reviewing costly estimation mistakes shows that the pattern repeats across industries and project types.

Buffer gaps are identifiable during review by checking whether the estimate includes a contingency line of at least 15 to 20% for well-understood projects and 25 to 35% for projects with novel technology or unclear requirements. Scope creep exposure is visible when requirements are documented at a high level without decomposition into specific deliverables. Unclear requirements are the single most reliable predictor of estimation failure, because ambiguity at the requirements stage compounds at every subsequent phase.
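The buffer ranges above translate into a simple review check. This sketch assumes the percentage is applied to the base (pre-contingency) estimate, and the profile keys are hypothetical labels for the two cases described.

```python
# Contingency ranges from the article, as fractions of the base estimate.
# Assumption: the percentage applies to the base (pre-contingency) estimate;
# the dictionary keys are hypothetical labels.
BUFFER_RANGES = {
    "well_understood": (0.15, 0.20),
    "novel_or_unclear": (0.25, 0.35),
}


def recommended_buffer_hours(base_hours: float, profile: str) -> tuple[float, float]:
    """Low and high contingency, in hours, for the given project profile."""
    low, high = BUFFER_RANGES[profile]
    return base_hours * low, base_hours * high


def buffer_gap(base_hours: float, contingency_hours: float, profile: str) -> bool:
    """True if the contingency line falls below the lower bound for the profile."""
    low, _ = BUFFER_RANGES[profile]
    return contingency_hours < base_hours * low


low_h, high_h = recommended_buffer_hours(1000, "novel_or_unclear")
print(f"recommended: {low_h:.0f}-{high_h:.0f} h")
print(buffer_gap(1000, 150, "novel_or_unclear"))  # True
```

For a 1,000-hour base estimate on a project with novel technology, the guideline implies 250 to 350 contingency hours, so a 150-hour buffer would be flagged.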

Verifying estimation accuracy and ensuring continuous improvement

Once pitfalls are addressed, validating accuracy and building a feedback loop ensures each project makes your next estimate more reliable.

- Compare estimates against actuals. After each project phase, document the variance between estimated and actual effort. Patterns in variance reveal systematic biases in your process.

- Apply Earned Value Management (EVM). For ongoing tracking, EVM provides objective metrics, including the Schedule Performance Index (SPI) and Cost Performance Index (CPI), that show how closely actual progress and spending track the estimate while the project is still underway.

- Solicit structured team feedback. After delivery, run a retrospective focused specifically on estimation quality. Ask what was consistently overestimated or underestimated, and why.

- Document lessons learned formally. Informal knowledge stays with individuals. Documented lessons become organizational assets that improve future estimates across teams.

- Update your estimation templates and benchmarks. Incorporate lessons into the standard process so improvements are systematic, not dependent on individual memory.
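The first two steps, variance tracking and EVM, come down to a few ratios. Below is a minimal sketch with hypothetical phase data and function names; SPI = EV / PV and CPI = EV / AC are the standard EVM definitions, where EV is earned value, PV is planned value, and AC is actual cost.

```python
from statistics import mean


def variance_ratio(estimated: float, actual: float) -> float:
    """Positive means underestimated (actual exceeded estimate); negative means overestimated."""
    return (actual - estimated) / estimated


def mean_variance(phases: list[tuple[float, float]]) -> float:
    """Average variance across (estimated, actual) pairs; a consistent sign suggests bias."""
    return mean(variance_ratio(est, act) for est, act in phases)


def spi(earned_value: float, planned_value: float) -> float:
    """Schedule Performance Index: EV / PV (below 1.0 means behind schedule)."""
    return earned_value / planned_value


def cpi(earned_value: float, actual_cost: float) -> float:
    """Cost Performance Index: EV / AC (below 1.0 means over budget)."""
    return earned_value / actual_cost


# Hypothetical effort data per phase, in hours: (estimated, actual).
phases = [(100, 130), (200, 250), (80, 100)]
print(f"mean variance: {mean_variance(phases):+.0%}")  # +27%: systematic underestimation

print(f"SPI = {spi(40_000, 50_000):.2f}, CPI = {cpi(40_000, 50_000):.2f}")  # 0.80, 0.80
```

A mean variance that stays positive across phases, as in this made-up data, is the kind of pattern the list above describes: a systematic bias to feed back into templates and benchmarks, not a one-off miss.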

Iterative updating is essential as scope clarifies. An estimate produced at project initiation should be treated as a rough order of magnitude. As requirements solidify through discovery and design phases, estimates must be revised. Treating an initial estimate as a fixed commitment is one of the most common and damaging mistakes in project management.

AI and ML tools are increasingly used for estimation support, with reported average accuracy improvements of 19% in 2026. However, these tools require human validation: they depend on the quality of their historical training data and cannot account for organizational context, team dynamics, or novel technical challenges. Structured estimation workshops remain the most reliable mechanism for surfacing team knowledge and building estimation consensus that stakeholders can trust.

Pro Tip: Establish a formal after-action review for every project, even short ones. A consistent record of estimated versus actual effort is the most valuable asset you can build for long-term estimation accuracy.

A pragmatic take: Iteration beats perfection

Perfect estimates don't exist in software development, and organizations that pursue them often create more dysfunction than the imprecision they're trying to eliminate. The pressure to produce a single accurate number before work begins ignores the fundamental reality that requirements evolve, technology surprises teams, and people are not interchangeable resources.

What years of estimation reviews reveal is that the teams with the best track records aren't the ones with the most sophisticated models. They're the ones that review consistently, document honestly, and adjust without ego. The most common expert trap is over-focusing on the precision of the number while underinvesting in stakeholder communication about what the number assumes and where it could shift.

Top-down pressure to "just be accurate" backfires unless it's paired with a genuine feedback culture where variances are analyzed rather than blamed. Treat every estimation workshop and review cycle as a skill-building opportunity. The process improves when teams learn from it, not when they're pressured to perform it perfectly from the start.

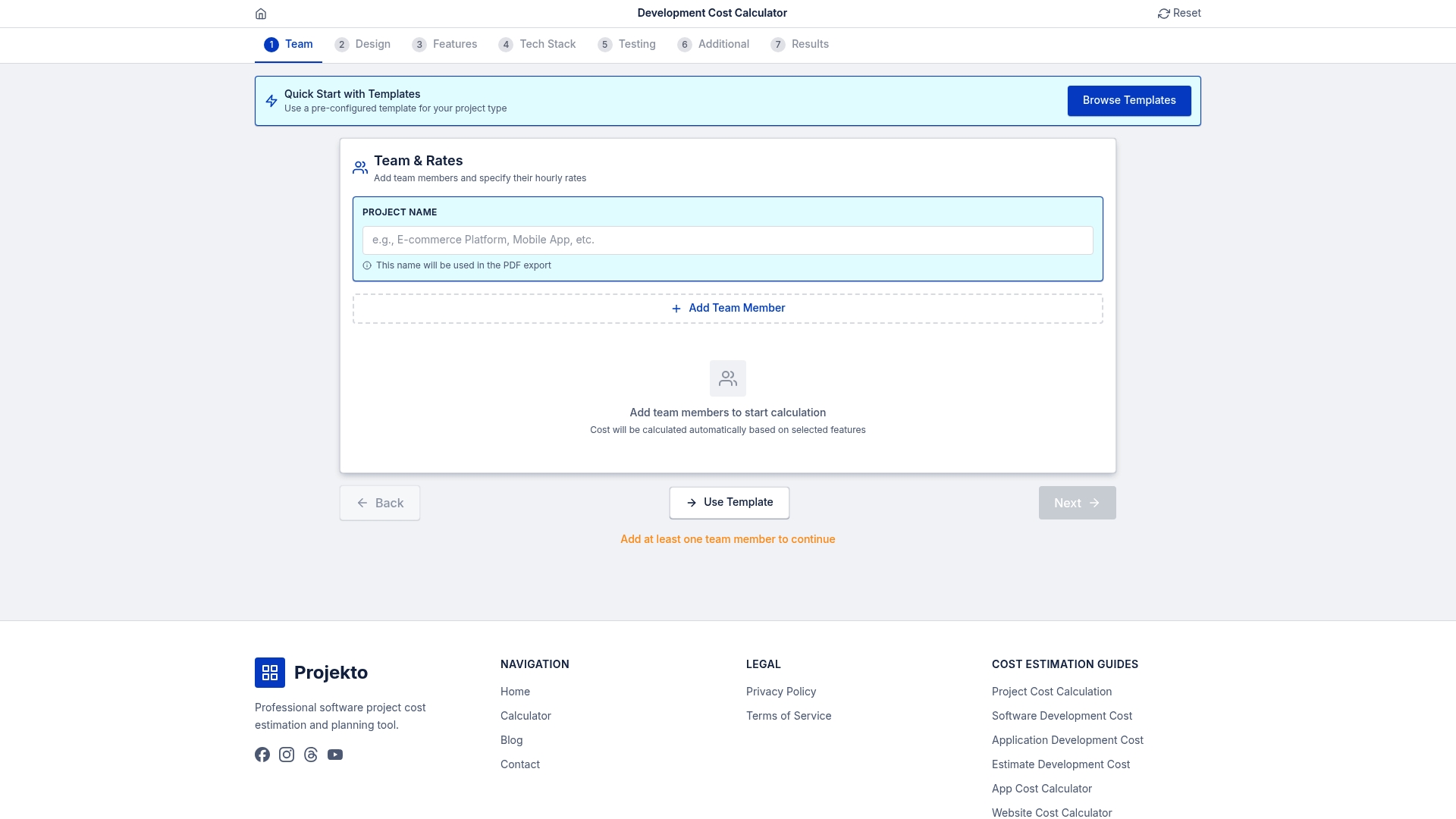

Estimate with confidence using the right tools

A structured review process significantly reduces estimation risk, but the right tools make execution faster and more defensible. Purpose-built calculators remove the manual work of scenario modeling and recalculation, letting your team focus on analysis rather than arithmetic.

The development cost calculator at Projecto provides structured estimation inputs for web and mobile app projects, giving decision-makers a reliable baseline to review and refine. For domain-specific projects, the event app estimator and healthcare app estimator offer pre-configured frameworks that account for industry-specific complexity. Use these tools to generate defensible starting points that your review process can then validate and adjust with confidence.

Frequently asked questions

What is the most accurate project estimation method?

Hybrid methods that combine algorithmic baselines with expert judgment consistently yield the best accuracy for software projects, balancing defensibility with practical context.

How often should project estimates be updated?

Estimates should be revised iteratively as scope clarifies, with formal revisions at each major project milestone rather than treated as a one-time deliverable.

What are red flags for flawed project estimations?

Missing contingency buffer, unclear or high-level requirements, and no non-coding effort included in the breakdown are the most reliable warning signs of an incomplete estimate.

How does AI/ML help in project estimation?

AI and ML tools can improve estimation accuracy by 19% on average, but outputs must be validated by experienced team members who can account for organizational and contextual factors the model cannot capture.
