Most software projects don't fail because of bad code. They fail because of bad estimates that nobody questioned. Between 60% and 80% of software projects overrun their original time estimates, by an average of 27%, yet most organizations treat generating an estimate as the finish line. It isn't. Validation, the practice of comparing estimated time against actual results and feeding that data back into future planning cycles, is what separates teams that consistently deliver from those that consistently apologize. This article breaks down why validation matters, how to implement it, and what measurable improvements leading teams have documented.

Table of Contents

- The hidden cost of unvalidated estimates

- Why teams consistently get estimates wrong: Biases and blind spots

- How validation works: From estimates to actionable benchmarks

- Frameworks and techniques for more reliable estimates

- Limits and edge cases: Where validation is essential

- Driving stakeholder trust through transparent estimation

- Improve your estimates with a purpose-built calculator

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Validation reduces overruns | Comparing estimates with actuals and feeding the variance into future planning consistently reduces project delays. |
| Counters cognitive bias | Validation directly addresses optimistic guesses and estimates anchored on early, unexamined numbers. |
| Boosts stakeholder trust | Sharing validated, evidence-based estimates builds confidence among business sponsors. |
| Works best with data | Calibration improves most when teams analyze actuals versus estimates across multiple projects. |

The hidden cost of unvalidated estimates

The numbers are stark. Only 35% of software projects finish on time and on budget, according to the CHAOS Report. That means nearly two out of three projects are delivering bad news to stakeholders, and most of those overruns trace back to estimates that were never stress-tested against reality.

The consequences of skipping validation extend well beyond a missed deadline. When estimates are wrong and nobody catches it early, the downstream effects compound quickly:

- Missed delivery commitments that damage client and executive trust

- Budget overruns that force scope cuts or emergency funding requests

- Team burnout from sustained crunch caused by unrealistic timelines

- Blame cycles where project managers and developers point fingers instead of solving problems

- Reduced credibility for future estimates, making stakeholder buy-in harder to secure

The root causes are well-documented. Teams skip post-project analysis, ignore historical data, and rely on gut feel rather than calibrated benchmarks. These are not random failures. They are predictable outcomes of common estimation pitfalls that validation directly addresses.

"The average cost overrun for software projects is not a rounding error. It is a structural problem rooted in how estimates are created and never revisited."

Now that you understand the scale and cost of estimation mistakes, let's examine the behavioral science behind these failures.

Why teams consistently get estimates wrong: Biases and blind spots

Cognitive bias is not a soft concept. In project estimation, it has hard financial consequences. Three biases show up in estimation failures again and again:

- Planning fallacy: Teams focus on best-case scenarios and ignore historical evidence of similar tasks taking longer.

- Optimism bias: Individuals systematically underestimate task duration, especially for work they find familiar.

- Anchoring: The first estimate given in a meeting tends to anchor all subsequent discussion, even when that number was a rough guess.

These biases don't cancel each other out. They compound. Validation counters planning fallacy, optimism bias, and anchoring by forcing teams to confront the gap between what they predicted and what actually happened.

Unchallenged estimates also create a political problem. When a project overruns and no validation process exists, there is no shared data to explain why. That vacuum fills with blame. Structured validation replaces blame with evidence, which is why aligning on estimates through a documented process is a governance issue, not just a technical one.

"Estimation bias is not a character flaw. It is a systems problem. Fix the system, and the estimates improve."

Pro Tip: Before your next sprint planning session, ask each team member to estimate independently before any group discussion. This simple step reduces anchoring bias and surfaces a wider range of perspectives before the group converges on a number.

Understanding software sizing and estimation as a structured discipline, rather than an informal exercise, is the first step toward building a bias-resistant process. With those behavioral hurdles in mind, let's see exactly how data-backed validation works to anchor estimates in reality.

How validation works: From estimates to actionable benchmarks

Validation is not a one-time audit. It is a continuous feedback loop built into your delivery process. Here is how high-performing teams implement it:

- Record estimates at the task level before work begins, not just at the project level.

- Track actuals in real time using time-logging tools or sprint tracking software.

- Compare estimated vs. actual at the end of each sprint or task completion.

- Calculate variance (actual minus estimated) and categorize by task type, team, and complexity.

- Feed variance data into the next estimation cycle as a calibration input.

- Build historical baselines by task type so future estimates start from evidence, not intuition.
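The loop above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation. `TaskRecord` and `baseline_by_task_type` are hypothetical names. The sketch assumes estimates and actuals are already exported from your tracking tool as hours, and the sample numbers are invented.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class TaskRecord:
    task_type: str          # category chosen by your team, e.g. "API integration"
    estimated_hours: float  # recorded before work begins
    actual_hours: float     # logged once the task is complete

    @property
    def variance_hours(self) -> float:
        # Variance as defined in the steps above: actual minus estimated.
        return self.actual_hours - self.estimated_hours


def baseline_by_task_type(records: list[TaskRecord]) -> dict[str, dict[str, float]]:
    """Aggregate completed tasks into per-type averages and a percentage variance."""
    grouped: dict[str, list[TaskRecord]] = defaultdict(list)
    for record in records:
        grouped[record.task_type].append(record)

    baseline = {}
    for task_type, items in grouped.items():
        avg_est = sum(r.estimated_hours for r in items) / len(items)
        avg_act = sum(r.actual_hours for r in items) / len(items)
        baseline[task_type] = {
            "avg_estimated": avg_est,
            "avg_actual": avg_act,
            # Overrun relative to the average estimate, in percent.
            "variance_pct": (avg_act - avg_est) / avg_est * 100,
        }
    return baseline
```

Suppose it is fed two API integration tasks, each estimated at 12 hours, that took 15 and 17 hours. It reports an average of 16 actual hours and a variance of about +33%, the kind of row shown in the table below.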

The payoff can be significant. Organizations that validate their time estimates have reported 40–60% improvements in accuracy and reductions in large overruns of more than 60%. That is not a marginal gain. It is a structural shift in how predictable your delivery pipeline becomes.

| Task type | Average estimated hours | Average actual hours | Variance (% of estimate) |
|---|---|---|---|
| Frontend UI component | 8 | 11 | +38% |
| API integration | 12 | 16 | +33% |
| Database schema design | 6 | 7 | +17% |
| QA and testing cycle | 10 | 15 | +50% |
| Deployment and DevOps | 5 | 6 | +20% |

A table like this, built from your own estimates and recorded actuals, becomes a living reference that removes guesswork from future planning. Time-tracking tools that log actuals alongside estimates can automate this data collection and reduce the administrative burden on your team.
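One simple way to feed a baseline like this back into planning is to scale each new estimate by its task type's historical actual-to-estimate ratio. The sketch below assumes that multiplicative correction, which is one straightforward calibration choice among several. `BASELINE`, `variance_pct`, and `calibrated_estimate` are hypothetical names, and the hours are the illustrative figures from the table. The sketch also recomputes the table's variance column as (actual - estimated) / estimated.

```python
# Illustrative baseline from the table: (average estimated hours, average actual hours).
BASELINE = {
    "Frontend UI component": (8, 11),
    "API integration": (12, 16),
    "Database schema design": (6, 7),
    "QA and testing cycle": (10, 15),
    "Deployment and DevOps": (5, 6),
}


def variance_pct(task_type: str) -> int:
    """Overrun as a whole-number percentage of the estimate."""
    avg_estimated, avg_actual = BASELINE[task_type]
    return round((avg_actual - avg_estimated) / avg_estimated * 100)


def calibrated_estimate(task_type: str, raw_hours: float) -> float:
    """Scale a fresh estimate by the task type's historical actual-to-estimate ratio."""
    avg_estimated, avg_actual = BASELINE[task_type]
    return raw_hours * avg_actual / avg_estimated


print([variance_pct(t) for t in BASELINE])               # [38, 33, 17, 50, 20]
print(calibrated_estimate("Frontend UI component", 16))  # 22.0
```

A frontend component someone would instinctively size at 16 hours therefore comes out at 22. The correction is only as good as the data behind it, so the ratios should be recomputed as new actuals arrive.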

Pro Tip: Start your validation practice with just three task types that recur most often in your projects. Building a small, accurate baseline beats maintaining a large, unreliable one. Expand the baseline as your data matures.

With the mechanics and impact of validation covered, let's look at the leading frameworks and real-world strategies for improving estimates.

Frameworks and techniques for more reliable estimates

No single technique works for every team or project type. The most effective approach combines methods that address different sources of estimation error. Techniques like three-point estimation, reference class forecasting, and planning poker improve accuracy and create natural validation checkpoints.

| Technique | Best for | Key strength | Validation mechanism |
|---|---|---|---|
| Three-point (PERT) | Tasks with uncertainty | Produces realistic ranges | Compares range to actuals |
| Reference class forecasting | Projects with historical data | Anchors in real outcomes | Uses past projects as baseline |
| Planning poker | Agile sprint planning | Surfaces team disagreement | Consensus reveals assumptions |
| Retrospective auditing | Post-sprint or post-project | Tracks variance over time | Directly feeds calibration loop |

Three-point estimation, also called PERT (Program Evaluation and Review Technique), asks teams to provide three values: optimistic, most likely, and pessimistic. The weighted average of these three produces a more realistic estimate than a single-point guess. Reference class forecasting goes further by anchoring estimates in data from similar completed projects rather than relying on the current team's intuition.
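The conventional PERT formulas weight the most likely value four times as heavily as each extreme: expected = (O + 4M + P) / 6. They use (P - O) / 6 as a rough standard deviation. The minimal sketch below also performs the "compares range to actuals" check from the table. The function names and the choice of a two-standard-deviation band are illustrative assumptions.

```python
def pert_estimate(optimistic: float, most_likely: float, pessimistic: float) -> tuple[float, float]:
    """Return the PERT expected duration and its conventional standard deviation."""
    if not optimistic <= most_likely <= pessimistic:
        raise ValueError("expected optimistic <= most_likely <= pessimistic")
    # Weighted average: the most likely value counts four times as much as each extreme.
    expected = (optimistic + 4 * most_likely + pessimistic) / 6
    # Rule of thumb: the full range is treated as roughly six standard deviations.
    std_dev = (pessimistic - optimistic) / 6
    return expected, std_dev


def actual_within_range(actual: float, expected: float, std_dev: float, k: float = 2.0) -> bool:
    """Validation check: did the actual land within expected +/- k standard deviations?"""
    return abs(actual - expected) <= k * std_dev


expected, spread = pert_estimate(optimistic=6, most_likely=10, pessimistic=20)
print(f"{expected:.1f} h +/- {spread:.1f} h")     # 11.0 h +/- 2.3 h
print(actual_within_range(15, expected, spread))  # True: 15 is within 11.0 +/- 4.7
```

The expected value (11 hours) sits above the most likely value (10 hours). A long pessimistic tail pulls the estimate upward, which is exactly the correction a single-point guess misses.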

Planning poker is particularly effective among software estimation techniques in agile environments because every team member reveals a number simultaneously, which reduces anchoring. When estimates diverge significantly, the discussion that follows often surfaces hidden complexity or risk.
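Simultaneous reveal only pays off if the team acts on the spread. The rule below is a hypothetical convention, not part of any standard. It treats votes more than one card apart as a signal to discuss before re-voting. The deck is an assumed modified-Fibonacci set, and `needs_discussion` is an illustrative name.

```python
# Assumed deck: a common modified-Fibonacci set. Many teams use their own variant.
DECK = [0, 1, 2, 3, 5, 8, 13, 20, 40, 100]


def needs_discussion(votes: dict[str, int], max_gap: int = 1) -> bool:
    """Return True when the highest and lowest cards are more than `max_gap` deck steps apart.

    Raises ValueError if someone plays a card that is not in DECK.
    """
    positions = [DECK.index(card) for card in votes.values()]
    return max(positions) - min(positions) > max_gap


# Cards are collected privately and revealed together, so nobody anchors on a colleague's number.
print(needs_discussion({"ana": 3, "ben": 5, "chen": 5}))   # False: adjacent cards, converge
print(needs_discussion({"ana": 3, "ben": 13, "chen": 5}))  # True: ask Ana and Ben to explain
```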

Pro Tip: Combine reference class forecasting with retrospective auditing. Use historical data to set your initial estimate range, then audit actuals after delivery to refine your reference class for the next project. This creates a self-improving estimation system.
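One common way to apply reference class forecasting is to collect the actual-to-estimate ratios of similar past projects and scale a new estimate by a chosen percentile of that distribution. The retrospective audit then adds each finished project's ratio back into the class. The sketch below shows that loop under assumptions: the function names are hypothetical, the 80% confidence default is an illustrative choice, and the ratios are invented.

```python
import statistics


def reference_class_uplift(past_ratios: list[float], confidence: float = 0.8) -> float:
    """Actual/estimate ratio that `confidence` of similar past projects stayed within."""
    if len(past_ratios) < 2:
        raise ValueError("need at least two past projects in the reference class")
    percent = round(confidence * 100)
    if not 1 <= percent <= 99:
        raise ValueError("confidence must be between 0.01 and 0.99")
    # quantiles(n=100) returns 99 cut points; index percent - 1 is the requested percentile.
    cut_points = statistics.quantiles(past_ratios, n=100, method="inclusive")
    return cut_points[percent - 1]


def forecast(raw_estimate_weeks: float, past_ratios: list[float], confidence: float = 0.8) -> float:
    """Scale the team's own estimate by the reference-class uplift."""
    return raw_estimate_weeks * reference_class_uplift(past_ratios, confidence)


def audit(past_ratios: list[float], estimated_weeks: float, actual_weeks: float) -> list[float]:
    """Retrospective step: add the finished project's actual/estimate ratio to the class."""
    return past_ratios + [actual_weeks / estimated_weeks]


ratios = [1.0, 1.1, 1.2, 1.3, 1.5]     # invented history of actual / estimated duration
print(round(forecast(10, ratios), 2))  # 13.4 weeks at 80% confidence
ratios = audit(ratios, estimated_weeks=10, actual_weeks=12)  # ratio 1.2 joins the class
```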

With these frameworks in hand, it's equally important to understand where they fall short, and where validation becomes essential.

Limits and edge cases: Where validation is essential

Validation matters most when uncertainty is highest. Early-stage estimates for novel or integration-heavy projects are notoriously unreliable. Because of unknown unknowns, they can be off by as much as 160%, a range so wide that a single-point estimate is nearly meaningless without a validation plan.

Key scenarios where validation is non-negotiable:

- Novel technology stacks: When your team has limited experience with a framework or platform, historical baselines don't exist yet. Build in a 30% or greater buffer and validate aggressively after early sprints.

- Complex third-party integrations: APIs, payment gateways, and external data sources introduce dependencies your team cannot fully control. Estimate conservatively and track actuals from the first integration task.

- Regulatory or compliance-driven projects: Scope changes driven by legal requirements are common and hard to predict. Frequent validation helps catch scope drift before it becomes a budget crisis.

- Large task decomposition: Breaking large tasks into smaller units reduces estimation error significantly. A 40-hour task estimate is far less reliable than four 10-hour task estimates validated individually.
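The decomposition point can be made concrete with a back-of-the-envelope calculation under two loud assumptions. First, every estimate carries the same relative error (30% here, an invented figure). Second, subtask errors are independent, so they partially cancel. In practice subtask errors are often correlated, since one misunderstood requirement can inflate all four, so the real improvement is usually smaller than this idealized case. `relative_spread` is a hypothetical helper.

```python
import math


def relative_spread(task_hours: list[float], relative_error: float) -> float:
    """Relative standard deviation of a total built from independently estimated tasks.

    Assumes each task's error has a standard deviation of relative_error * hours and
    that the errors are independent, so variances (not standard deviations) add up.
    """
    total = sum(task_hours)
    combined_sd = math.sqrt(sum((relative_error * hours) ** 2 for hours in task_hours))
    return combined_sd / total


print(f"{relative_spread([40], 0.30):.0%}")      # 30%: one 40-hour estimate, +/- 12 hours
print(f"{relative_spread([10] * 4, 0.30):.0%}")  # 15%: four 10-hour estimates, +/- 6 hours combined
```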

Frequent validation rapidly narrows the cone of uncertainty. Each sprint that produces actuals gives you better data for the next estimate. Teams that validate consistently find that their unknowns in estimation shrink over time as their historical baseline grows.

"The cone of uncertainty is not a fixed property of your project. It is a function of how much validated data you have collected."

A practical grasp of limitations ensures you can prioritize validation where it matters most. Next, let's address how to communicate validated estimates to improve trust and collaboration.

Driving stakeholder trust through transparent estimation

Validated estimates are only valuable if they are communicated clearly. Stakeholders who receive a single-point estimate with no context have no way to assess risk. Stakeholders who receive a validated range with documented assumptions can make informed decisions.

Here is a structured approach to transparent estimation communication:

- Present ranges, not single points. Share P50 (50% confidence) and P75 (75% confidence) estimates so stakeholders understand the probability distribution behind the number.

- Document all assumptions explicitly. Every estimate rests on assumptions about scope, team capacity, and dependencies. Write them down and share them.

- Communicate known risks and their potential impact on the timeline before the project starts, not after a delay occurs.

- Involve stakeholders in validation reviews. When key stakeholders participate in retrospective audits, they develop a realistic understanding of estimation complexity and are less likely to treat overruns as failures of effort.

- Use audits for improvement, not accountability. Frame validation reviews as learning exercises. Teams that fear blame hide problems. Teams that expect learning surface them early.
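For teams that already collect three-point estimates, a small Monte Carlo simulation is one common way (not the only one) to turn task-level ranges into project-level P50 and P75 figures. The sketch below is hypothetical. `simulate_percentiles` is an illustrative helper, and the task ranges are invented. Triangular distributions and independent tasks are simplifying assumptions.

```python
import random
import statistics


def simulate_percentiles(
    tasks: list[tuple[float, float, float]], runs: int = 10_000, seed: int = 42
) -> tuple[float, float]:
    """Monte Carlo P50 and P75 for a project built from three-point task estimates.

    tasks: (optimistic, most_likely, pessimistic) hours per task.
    Each duration is drawn from a triangular distribution and tasks are treated
    as independent; both are simplifying assumptions.
    """
    rng = random.Random(seed)  # fixed seed so the numbers are reproducible
    totals = [
        sum(rng.triangular(low, high, mode) for low, mode, high in tasks)
        for _ in range(runs)
    ]
    cut_points = statistics.quantiles(totals, n=100)  # 99 percentile cut points
    return cut_points[49], cut_points[74]             # 50th and 75th percentiles


p50, p75 = simulate_percentiles([(6, 8, 14), (10, 12, 22), (8, 10, 18)])
print(f"P50 ~ {p50:.0f} h, P75 ~ {p75:.0f} h")
```

Quote the P50 as the likely outcome and the P75 as the more conservative commitment. Over many projects, roughly three in four should finish at or under their P75. If far fewer do, that gap is itself a validation finding.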

Transparency in communicating assumptions, risks, and validated estimates builds stakeholder trust and reduces finger-pointing when timelines shift. This approach also creates a shared language between technical teams and business stakeholders, which is one of the most durable benefits of a mature estimation consensus strategy.

"Stakeholders don't expect perfection. They expect honesty about uncertainty and a credible plan for managing it."

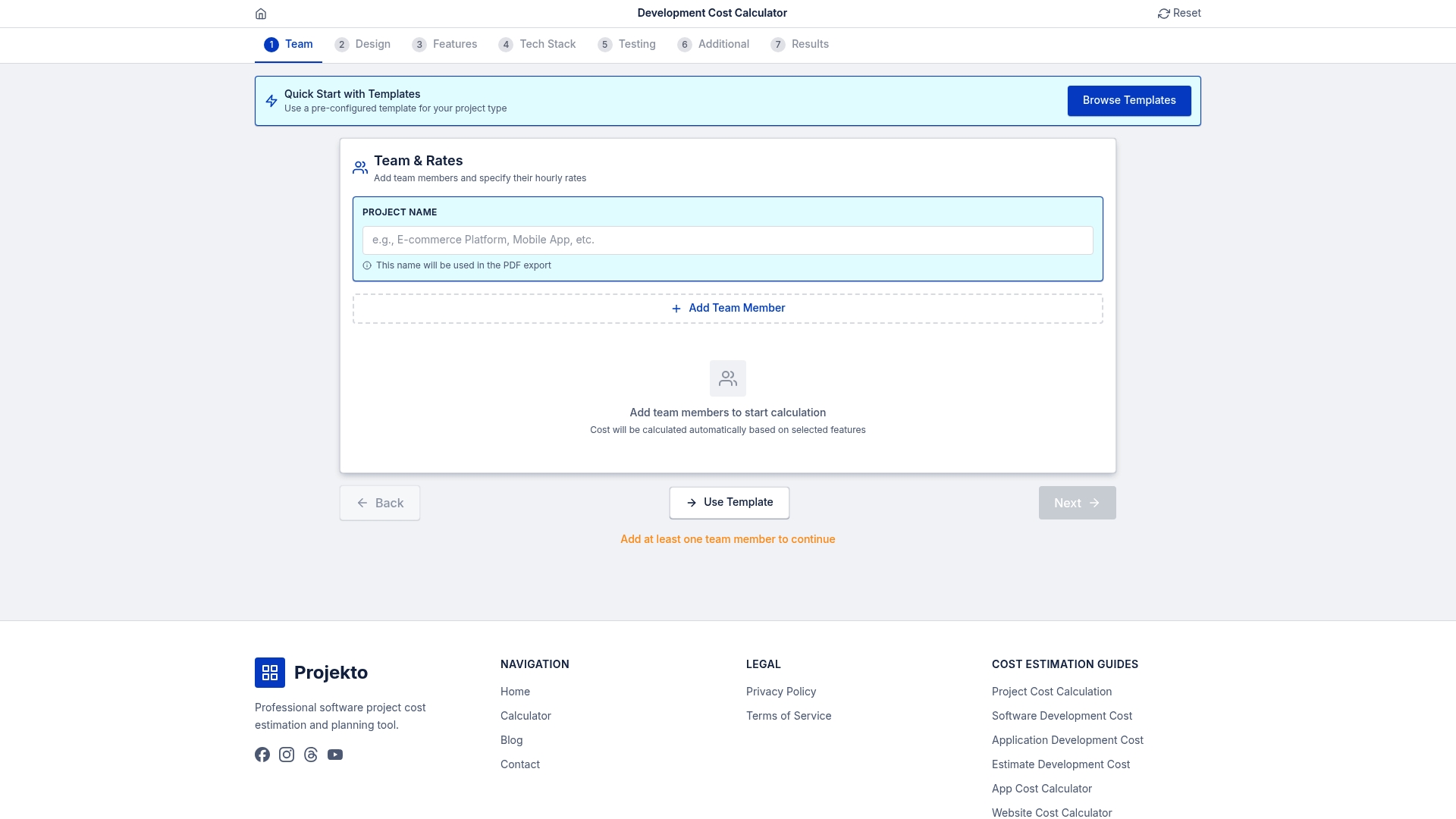

Improve your estimates with a purpose-built calculator

Building a validation-ready estimation process starts with having accurate initial estimates to validate against. Without a structured starting point, even the best feedback loop has nothing reliable to calibrate.

The Projecto Calculator gives project managers and business stakeholders a structured, data-driven foundation for web and mobile app development estimates. It accounts for feature complexity, team composition, and technology stack to produce ranges you can actually defend in a stakeholder meeting. Pair it with the validation practices outlined in this article, and you have a complete system: credible initial estimates, continuous calibration, and a growing historical baseline that makes every future project more predictable. Start with a reliable estimate, then validate your way to consistent delivery.

Frequently asked questions

What does it mean to validate a time estimate?

Validating a time estimate means comparing your predicted duration to the actual time recorded after task or sprint completion, then using that variance data to refine the models and assumptions driving future estimates.

How often should time estimates be validated?

Continuous calibration after each sprint or key task completion is considered best practice, because it catches misalignments quickly before they compound across multiple delivery cycles.

What validation method works best for software teams?

Combining three-point estimates, reference class forecasting, and frequent retrospectives typically yields the highest accuracy, because each method addresses a different source of estimation error.

Can AI and machine learning improve estimate validation?

AI and ML can reduce estimation errors significantly when sufficient historical data exists. Some models have achieved story point prediction errors as low as 0.68 to 0.70 mean absolute error (MAE) on well-documented codebases.

Are there projects where validating time estimates is less useful?

For high-trust, flow-driven teams using NoEstimates approaches, tracking cycle time and throughput can sometimes replace detailed estimate validation, though this works best in mature teams with stable, well-understood workflows.