TL;DR:

- Choosing the right estimation framework depends on project size, data availability, and accuracy needs.

- Algorithmic models are scalable and defensible but require accurate inputs and calibration.

- Combining methods like expert judgment with structured techniques improves estimation reliability and accounts for uncertainties.

Selecting the wrong cost estimation framework for a web or mobile app project can derail budgets before a single line of code is written. Business owners and project managers face a genuinely difficult decision: dozens of frameworks exist, each built for different project conditions, team structures, and accuracy requirements. Some models demand years of historical data. Others rely on expert consensus that can drift toward optimism. This guide breaks down the top cost estimation frameworks, compares their strengths and limitations, and offers practical selection advice so you can match the right method to your specific project context.

Table of Contents

- Key criteria for choosing a cost estimation framework

- Algorithmic frameworks: COCOMO II, Function Point Analysis, and parametric methods

- Expert judgment and consensus methods: Planning Poker, Wideband Delphi, and hybrid approaches

- Bottom-up, top-down, and three-point (PERT) estimation: when and how to use

- The uncomfortable truth about estimation: why no single framework works for every app project

- Take your next step with accurate app project estimation

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Estimation is multidimensional | Successful projects use a mix of frameworks, not just one approach. |
| Buffer for unknowns | Always add 10 to 20% extra to your estimates for unexpected factors. |
| Bias is a hidden risk | Expert methods can be fast but are susceptible to optimism and anchoring bias. |
| Combine methods for contracts | Algorithmic models are defensible for contracts but more accurate when combined with expert and consensus input. |
| Iterate and review | Keep refining estimates throughout your project to reduce risk and improve budget accuracy. |

Key criteria for choosing a cost estimation framework

Before evaluating any specific framework, you need to assess the conditions your project actually operates under. Choosing a method without this groundwork is a common source of software estimation mistakes that inflate budgets or create unrealistic delivery timelines.

Here are the core criteria to evaluate:

- Project size and complexity. Small MVPs with a handful of features behave very differently from enterprise platforms with dozens of integrations. Larger, more complex projects justify the overhead of rigorous algorithmic methods.

- Available historical data. Algorithmic models need historical data for calibration and can miss badly, in either direction, without it. If your organization lacks past project metrics, expert-driven methods are a safer starting point.

- Team expertise and process maturity. A team running mature agile sprints can leverage Planning Poker effectively. A team new to structured estimation may need top-down guidance before attempting granular bottom-up breakdowns.

- Desired speed versus accuracy. Fast ballpark estimates for investor pitches require different tools than binding contract figures. Aligning your accuracy target to the estimate's purpose prevents over-engineering the process.

- Regulatory and contract requirements. Fixed-price contracts or government procurement often demand defensible, auditable estimates. Algorithmic frameworks produce documented, traceable outputs that hold up under scrutiny.

- Stakeholder alignment needs. When multiple teams or vendors are involved, achieving estimation consensus becomes a process requirement, not just a nice-to-have.

Pro Tip: Regardless of which framework you select, always build in a 10 to 20% buffer for unknowns. Edge cases, scope clarifications, and third-party API surprises are routine in app development, and no framework fully accounts for them at the outset.

Think of these criteria as a filter, not a checklist. You are narrowing down which frameworks are even viable for your situation before comparing them on merit.

Algorithmic frameworks: COCOMO II, Function Point Analysis, and parametric methods

Having clarified the critical evaluation criteria, we move to the algorithmic frameworks that underpin most large-scale cost estimates. These methods use quantitative formulas, project size metrics, and calibrated cost drivers to produce structured outputs.

COCOMO II (Constructive Cost Model II) is the most widely referenced algorithmic framework in software engineering. In its Post-Architecture form, it combines projected size in source lines of code with 17 cost drivers and five scale factors to calculate effort in person-months. The model accounts for variables like team capability, platform complexity, and required reliability. It is most reliable when your organization has calibrated it against past projects of similar type and scale.
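
To make the structure of the model concrete, here is a minimal Python sketch of the COCOMO II effort equation: effort = A × size^E × (product of cost driver values), where E = B + 0.01 × (sum of scale factor values). The constants A = 2.94 and B = 0.91 are the published COCOMO II.2000 defaults; a locally calibrated model would replace them. The project size, scale factor values, and the two non-nominal cost drivers below are hypothetical inputs chosen only for illustration.

```python
from math import prod

# Published COCOMO II.2000 default constants (replace with local calibration).
A = 2.94
B = 0.91


def cocomo_ii_effort(ksloc, scale_factors, effort_multipliers):
    """Return estimated effort in person-months.

    ksloc: projected size in thousands of source lines of code.
    scale_factors: numeric values of the five scale factors.
    effort_multipliers: numeric values of the cost drivers (1.0 = nominal).
    """
    exponent = B + 0.01 * sum(scale_factors)
    return A * ksloc ** exponent * prod(effort_multipliers)


# Hypothetical project: 40 KSLOC, illustrative scale factor values,
# 15 nominal cost drivers plus two made-up adjustments.
scale_factors = [3.72, 3.04, 4.24, 3.29, 4.68]
effort_multipliers = [1.0] * 15 + [1.10, 0.90]

effort = cocomo_ii_effort(40, scale_factors, effort_multipliers)
print(f"Estimated effort: {effort:.1f} person-months")
```

Because size is raised to an exponent above 1 whenever the scale factors sum to more than 9, an error in the SLOC projection is amplified rather than averaged out, which is why the size input deserves the most scrutiny.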

Function Point Analysis (FPA) shifts the measurement unit from code to functionality. Instead of counting lines, you count external inputs, external outputs, external inquiries, internal logical files, and external interface files, then adjust for technical complexity. FPA is language-independent, which makes it useful for cross-platform estimates where the same feature set might be built in different environments.
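
As a rough illustration of how a function point count comes together, the Python sketch below weights each component type and then applies a value adjustment factor. It is a simplification: it uses the commonly cited IFPUG weights for average-complexity components only, whereas a real count rates each component as low, average, or high. The component counts and the total degree of influence are hypothetical.

```python
# Commonly cited IFPUG weights for average-complexity components.
AVERAGE_WEIGHTS = {
    "external_inputs": 4,
    "external_outputs": 5,
    "external_inquiries": 4,
    "internal_logical_files": 10,
    "external_interface_files": 7,
}


def unadjusted_function_points(counts):
    """Sum each component count multiplied by its average-complexity weight."""
    return sum(AVERAGE_WEIGHTS[name] * n for name, n in counts.items())


def adjusted_function_points(ufp, total_degree_of_influence):
    """Apply the value adjustment factor.

    total_degree_of_influence: sum of the 14 general system
    characteristics, each rated 0 to 5 (so the total is 0 to 70).
    """
    value_adjustment_factor = 0.65 + 0.01 * total_degree_of_influence
    return ufp * value_adjustment_factor


# Hypothetical counts for a small mobile app backend.
counts = {
    "external_inputs": 12,
    "external_outputs": 8,
    "external_inquiries": 6,
    "internal_logical_files": 5,
    "external_interface_files": 2,
}

ufp = unadjusted_function_points(counts)
afp = adjusted_function_points(ufp, total_degree_of_influence=35)
print(f"Unadjusted: {ufp} FP, adjusted: {afp:.1f} FP")
```

The resulting function point total is a size measure, not a cost. Turning it into effort still requires a productivity rate (hours per function point) drawn from your own historical data.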

Parametric estimation is a broader category that includes any model using statistical relationships between project parameters and cost. COCOMO II is itself a parametric model, and function point counts commonly serve as the size input to such models. The term also covers commercial tools and custom regression models built from proprietary project databases.

| Framework | Primary input | Best for | Key limitation |
|---|---|---|---|
| COCOMO II | Source lines of code | Large, well-defined projects | Requires calibration data |
| Function Point Analysis | Function point count (inputs, outputs, inquiries, files, interfaces) | Cross-platform estimates | Time-consuming to measure |
| Parametric models | Historical project data | Organizations with data maturity | Garbage-in, garbage-out risk |

The strengths of algorithmic methods are real. They are defensible, scalable, and produce outputs that can be reviewed and audited. For teams looking to calculate development cost on a fixed-price engagement, algorithmic models provide the documentation trail that contract negotiations require.

The drawbacks are equally real. Without accurate inputs, these models can produce estimates that are significantly off from actual outcomes. Poor SLOC projections or miscalibrated cost drivers compound into large errors at the project level.

Pro Tip: Use algorithmic models specifically for contracts or regulatory scenarios where auditability matters. For early-stage scoping, the input precision required often does not yet exist, making these models premature.

Algorithmic frameworks also reward investment in team size estimation, since staffing assumptions feed directly into effort calculations and final cost outputs.

Expert judgment and consensus methods: Planning Poker, Wideband Delphi, and hybrid approaches

After reviewing algorithmic solutions, let's explore how expert-driven and collaborative approaches help when data is scarce or projects are dynamic. These methods leverage the collective knowledge of your development team rather than historical databases.

Planning Poker is the most widely used agile estimation technique. Team members simultaneously reveal card-based estimates for individual user stories, then discuss discrepancies until consensus is reached. The simultaneous reveal is designed to limit anchoring, where the first number heard unconsciously influences everyone else's judgment.

Wideband Delphi is a more structured approach. Experts submit estimates independently, a facilitator aggregates and shares the range anonymously, and the group iterates through multiple rounds until estimates converge. The anonymity reduces social pressure and surfaces genuine disagreement early.
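
The facilitator's role in each round can be sketched in a few lines of Python: share only the anonymous spread of estimates, then decide whether another round is needed. The convergence rule used here (spread within 25% of the median) is an assumption for illustration, not part of the Wideband Delphi method itself, and the round data is made up.

```python
from statistics import median


def summarize_round(estimates):
    """Anonymous summary to share with the group: the spread, no names."""
    return {
        "low": min(estimates),
        "median": median(estimates),
        "high": max(estimates),
    }


def has_converged(estimates, tolerance=0.25):
    """True when (high - low) is within `tolerance` of the median.

    The 25% default is a hypothetical threshold; pick one that fits
    the precision your estimate actually needs.
    """
    summary = summarize_round(estimates)
    spread = summary["high"] - summary["low"]
    return spread <= tolerance * summary["median"]


# Made-up estimates (in person-days) from four experts over three rounds.
rounds = [
    [20, 35, 60, 28],
    [28, 34, 45, 30],
    [30, 33, 36, 32],
]

for number, estimates in enumerate(rounds, start=1):
    print(number, summarize_round(estimates), has_converged(estimates))
```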

Hybrid approaches combine algorithmic baselines with expert adjustments. A team might use Function Point Analysis to generate an initial estimate, then apply Planning Poker to validate individual feature complexity. This cross-check reduces the blind spots inherent in either method alone.

Here is a structured process for running effective consensus estimation sessions:

- Break all tasks into units smaller than one day of effort before the session begins.

- Provide context documents so all participants share the same understanding of requirements.

- Run at least two rounds of independent estimation before group discussion.

- Flag any item where estimates diverge by more than 2x for dedicated discussion.

- Document the final estimate alongside the key assumptions that drove it.
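
The divergence check in the steps above is easy to automate once estimates are collected. The following Python sketch flags any item whose highest estimate is more than twice its lowest. The item names and hour values are hypothetical.

```python
def flag_divergent_items(estimates_by_item, max_ratio=2.0):
    """Return the items whose highest estimate exceeds max_ratio x the lowest."""
    flagged = []
    for item, estimates in estimates_by_item.items():
        if max(estimates) > max_ratio * min(estimates):
            flagged.append(item)
    return flagged


# Hypothetical independent estimates, in hours, from four team members.
estimates_by_item = {
    "login screen": [3, 4, 4, 5],
    "push notifications": [2, 6, 3, 8],
    "payment integration": [4, 5, 6, 8],
}

print(flag_divergent_items(estimates_by_item))
# prints ['push notifications']
```

Note that "payment integration" is not flagged: its estimates span exactly 2x, and the rule only triggers when the spread is more than 2x.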

Expert judgment methods are fast but prone to optimism and anchoring bias. The most common failure mode is a senior engineer's initial estimate anchoring the entire team, even in formats designed to prevent it.

"Large tasks can have ±50% accuracy; break them down into smaller units for finer, more reliable estimates."

Achieving reliable team estimation consensus requires discipline around task decomposition. Teams that skip this step consistently underestimate integration work and testing cycles. Exploring structured team estimation methods can significantly improve the quality of consensus-based outputs.

Bottom-up, top-down, and three-point (PERT) estimation: when and how to use

With expert consensus methods covered, it is crucial to also understand structured estimation techniques that help balance speed and accuracy. These three approaches are often used in combination and serve distinct purposes across the project lifecycle.

Bottom-up estimation starts at the task level. Every feature, integration, and testing cycle is estimated individually, then rolled up into a project total. This method produces the highest accuracy but requires a well-defined scope and significant estimator time.

Top-down estimation works from the overall project scope downward. A project manager uses analogous projects, rough size categories, or industry benchmarks to assign a total budget, then distributes it across workstreams. It is fast and useful for early feasibility checks, but it can miss complexity buried in specific features.

Three-point estimation (PERT) addresses uncertainty directly by requiring three scenarios for each task: optimistic (O), pessimistic (P), and most likely (M). The PERT formula calculates a weighted average: (O + 4M + P) / 6. This produces a statistically grounded estimate that reflects real uncertainty rather than a single-point guess.
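
Here is the PERT formula as a short Python sketch. In addition to the weighted average described above, it returns the conventional PERT standard deviation, (P − O) / 6, which is not mentioned in this guide but is commonly paired with the formula to express how wide the uncertainty is. The task values are hypothetical.

```python
def pert_estimate(optimistic, most_likely, pessimistic):
    """Return (expected, standard_deviation) for one task."""
    expected = (optimistic + 4 * most_likely + pessimistic) / 6
    std_dev = (pessimistic - optimistic) / 6
    return expected, std_dev


# Hypothetical task: 4 days at best, 6 most likely, 14 if things go badly.
expected, std_dev = pert_estimate(optimistic=4, most_likely=6, pessimistic=14)
print(f"Expected: {expected:.2f} days, standard deviation: {std_dev:.2f} days")
# prints "Expected: 7.00 days, standard deviation: 1.67 days"
```

The expected value of 7 days sits above the most likely value of 6 because the pessimistic scenario is much further from the middle than the optimistic one. That skew is exactly what a single-point guess hides.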

Bottom-up estimation is accurate but time-intensive, while top-down is quick but high-level; combining both yields the best results for most app development projects.

| Method | Accuracy | Effort required | Speed | Best project size |
|---|---|---|---|---|
| Bottom-up | High | High | Slow | Large, complex builds |
| Top-down | Low to medium | Low | Fast | Early-stage scoping |
| Three-point (PERT) | Medium to high | Medium | Moderate | High-uncertainty projects |

Here is when to apply each method:

- Use bottom-up when you have a defined feature list and need a defensible budget for stakeholder approval.

- Use top-down when you need a quick ballpark for a pitch deck or initial feasibility discussion.

- Use PERT when the project involves significant technical unknowns, new technology stacks, or regulatory dependencies.

Pro Tip: Run top-down and bottom-up estimates in parallel on complex projects. If the two outputs diverge by more than 30%, that gap signals unresolved scope ambiguity that needs resolution before budgeting.
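
A quick way to apply this check is sketched below in Python. The tip does not say what the 30% is measured against, so this sketch assumes the gap is taken relative to the smaller of the two estimates, which is the stricter reading. The workstream names and hour figures are hypothetical.

```python
def divergence(top_down, bottom_up):
    """Relative gap between two estimates, measured against the smaller one."""
    return abs(top_down - bottom_up) / min(top_down, bottom_up)


# Hypothetical bottom-up breakdown, in hours, rolled up into a total.
bottom_up_tasks = {
    "authentication": 400,
    "catalog and search": 350,
    "checkout": 300,
    "testing and release": 310,
}
bottom_up_total = sum(bottom_up_tasks.values())

# Hypothetical top-down figure taken from an analogous past project.
top_down_total = 1000

gap = divergence(top_down_total, bottom_up_total)
print(f"Bottom-up: {bottom_up_total} h, top-down: {top_down_total} h, gap: {gap:.0%}")

if gap > 0.30:
    print("Gap exceeds 30%: resolve scope ambiguity before budgeting.")
```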

For teams seeking a structured starting point, a software project cost calculator can anchor the top-down estimate while your team works through the bottom-up detail. Structured estimation workshops are also an effective way to run bottom-up sessions with cross-functional teams.

The uncomfortable truth about estimation: why no single framework works for every app project

Armed with an understanding of various methods, let's confront the realities of estimation that seasoned project leaders know well. Every framework covered in this guide was designed under specific assumptions about project type, team structure, and data availability. Real app projects routinely violate those assumptions.

COCOMO II assumes you can accurately project source lines of code before writing any. Planning Poker assumes your team's collective judgment is well-calibrated and free of social dynamics. PERT assumes you can meaningfully distinguish optimistic from pessimistic scenarios on features that have never been built before.

The uncomfortable reality is that estimation accuracy improves through iteration, not through framework selection alone. Teams that avoid estimation pitfalls treat each estimate as a living document, updated as requirements clarify and technical risks surface.

Project volatility, shifting stakeholder priorities, and team turnover all erode the accuracy of any initial estimate over time. Adding a 10 to 20% buffer for unknowns is not a workaround; it is a professional standard that reflects the inherent uncertainty of software development.

The most effective teams combine methods: use algorithmic models for contract documentation, expert consensus for sprint-level planning, and PERT for high-risk feature sets. Treat the final number as a range with a confidence level, not a fixed commitment.
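
One way to turn task-level three-point estimates into a project-level range is sketched below in Python. It rests on two simplifying assumptions that real projects often violate: task estimates are treated as independent, and the project total is treated as roughly normal, so that plus or minus two standard deviations covers about 95% of outcomes. The task figures are hypothetical.

```python
from math import sqrt


def project_range(tasks, z=2.0):
    """Roll up three-point task estimates into (low, expected, high).

    tasks: list of (optimistic, most_likely, pessimistic) tuples.
    z: number of standard deviations on each side of the expected total.
    """
    expected = sum((o + 4 * m + p) / 6 for o, m, p in tasks)
    # Assuming independent tasks, variances add; standard deviations do not.
    variance = sum(((p - o) / 6) ** 2 for o, m, p in tasks)
    spread = z * sqrt(variance)
    return expected - spread, expected, expected + spread


# Hypothetical feature estimates in person-days.
tasks = [
    (10, 20, 40),
    (30, 40, 80),
    (5, 8, 20),
]

low, expected, high = project_range(tasks)
print(f"Expected {expected:.0f} person-days, range {low:.0f} to {high:.0f}")
```

If tasks share a common risk, such as the same unproven third-party API, the independence assumption understates the spread, and the real range is wider than this calculation suggests.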

Take your next step with accurate app project estimation

Understanding which framework fits your project is only the first step. Applying that knowledge to generate a real, actionable estimate requires the right tools calibrated to your project type and scope.

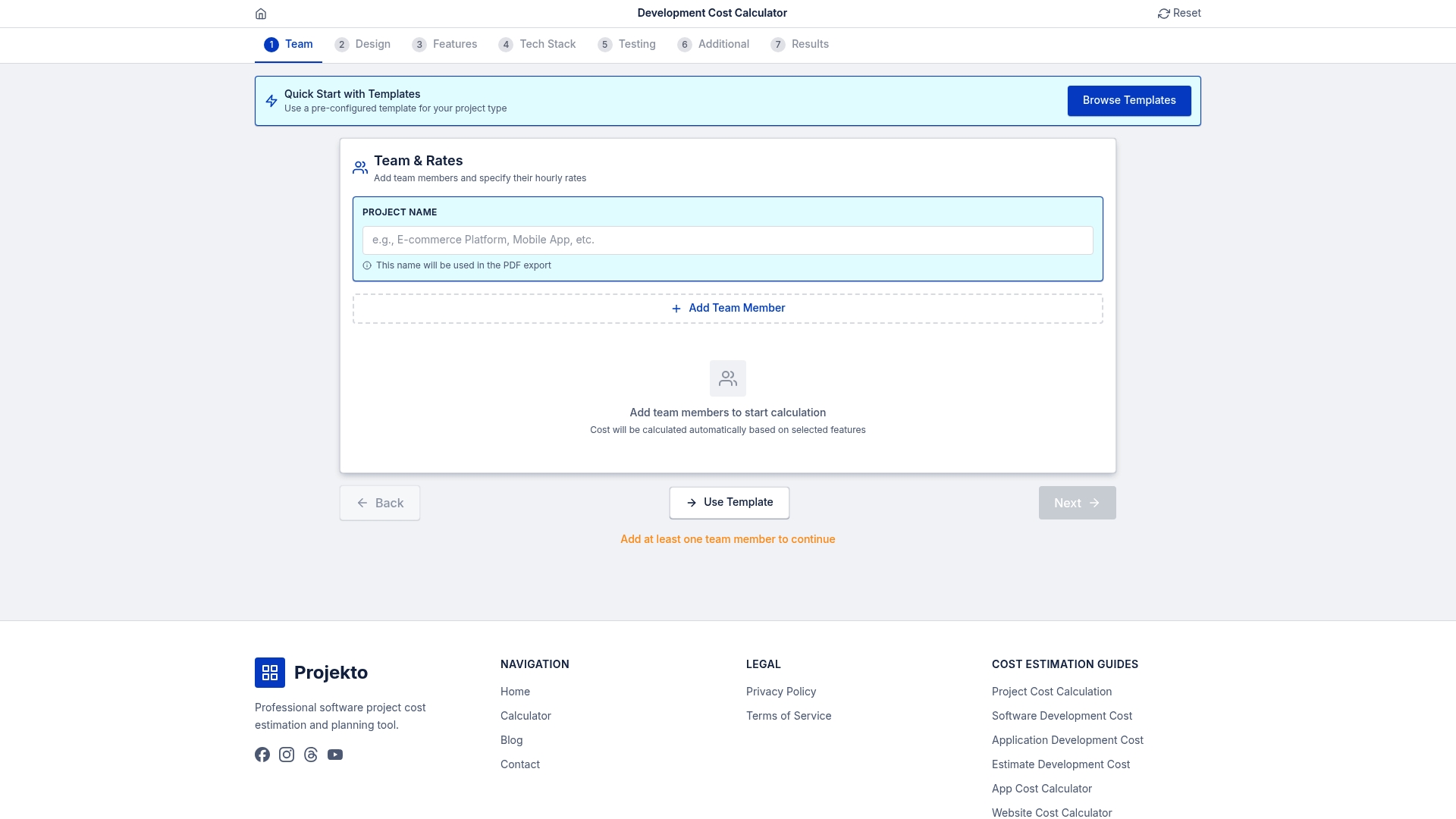

Our development cost calculator lets you input your project parameters and instantly generate structured time and budget estimates grounded in real development data. If you are building in a specific vertical, explore purpose-built estimates for an event management app cost or a healthcare app cost. These resources give you a concrete starting point that you can refine using the frameworks covered in this guide, turning theoretical knowledge into a working project budget.

Frequently asked questions

What is the most accurate cost estimation framework for app development?

Bottom-up estimation is generally the most accurate single method for complex projects because it totals individual task estimates, but hybrid approaches that combine algorithmic models with expert judgment tend to be more reliable than any one method alone.

How can I avoid bias in expert judgment-based estimates?

Use structured consensus methods like Wideband Delphi, decompose tasks into units smaller than one day, and include independent cross-verification from team members not involved in the original estimate. Expert judgment is prone to anchoring bias; structured anonymity helps neutralize it.

Should I add a buffer to my cost estimates?

Yes. Adding a 10 to 20% buffer for unknowns is a professional standard in software development, accounting for edge cases, scope changes, and integration surprises that initial estimates routinely miss.

Are algorithmic models suitable for all project types?

Algorithmic models need historical data for accurate calibration and can be significantly off on smaller or less-defined projects. They are best reserved for larger engagements where sufficient past project data exists.

How can I use cost estimation frameworks in contract negotiations?

Algorithmic frameworks produce defensible, auditable outputs that hold up under contract scrutiny. Always document your input assumptions and include explicit buffers so there is room for negotiation without compromising your baseline.